Compare commits

1 Commits

| Author | SHA1 | Date |
|---|---|---|
| | 0f6b46a39d | |

@@ -69,7 +69,7 @@ jobs:

|

||||

- name: Set up Docker Buildx

|

||||

uses: docker/setup-buildx-action@v2

|

||||

|

||||

- name: Build and push container image (latest)

|

||||

- name: Build container image (latest)

|

||||

if: github.event_name == 'push'

|

||||

uses: docker/build-push-action@v2

|

||||

with:

|

||||

@@ -77,11 +77,11 @@ jobs:

|

||||

tags: |

|

||||

${{ secrets.DOCKER_HUB_REPOSITORY }}/${{ env.IMAGE_NAME }}:${{ env.LATEST_TAG }}

|

||||

${{ secrets.PUBLIC_ECR_REPOSITORY }}/${{ env.IMAGE_NAME }}:${{ env.LATEST_TAG }}

|

||||

file: ${{ env.DOCKERFILE_PATH }}

|

||||

file: ${{ env.DOCKERFILE_PATH }}

|

||||

cache-from: type=gha

|

||||

cache-to: type=gha,mode=max

|

||||

|

||||

- name: Build and push container image (release)

|

||||

- name: Build container image (release)

|

||||

if: github.event_name == 'release'

|

||||

uses: docker/build-push-action@v2

|

||||

with:

|

||||

|

||||

20

.github/workflows/pypi-release.yml

vendored

@@ -6,6 +6,7 @@ on:

|

||||

|

||||

env:

|

||||

RELEASE_TAG: ${{ github.event.release.tag_name }}

|

||||

GITHUB_BRANCH: master

|

||||

|

||||

jobs:

|

||||

release-prowler-job:

|

||||

@@ -16,7 +17,8 @@ jobs:

|

||||

steps:

|

||||

# Checks-out your repository under $GITHUB_WORKSPACE, so your job can access it

|

||||

- uses: actions/checkout@v3

|

||||

|

||||

with:

|

||||

ref: ${{ env.GITHUB_BRANCH }}

|

||||

- name: Install dependencies

|

||||

run: |

|

||||

pipx install poetry

|

||||

@@ -46,7 +48,6 @@ jobs:

|

||||

with:

|

||||

token: ${{ secrets.PROWLER_ACCESS_TOKEN }}

|

||||

commit-message: "chore(release): update Prowler Version to ${{ env.RELEASE_TAG }}."

|

||||

base: master

|

||||

branch: release-${{ env.RELEASE_TAG }}

|

||||

labels: "status/waiting-for-revision, severity/low"

|

||||

title: "chore(release): update Prowler Version to ${{ env.RELEASE_TAG }}"

|

||||

@@ -58,6 +59,13 @@ jobs:

|

||||

### License

|

||||

|

||||

By submitting this pull request, I confirm that my contribution is made under the terms of the Apache 2.0 license.

|

||||

# Create pull request to github.com/Homebrew/homebrew-core to update prowler formula

|

||||

- name: Bump Homebrew formula

|

||||

uses: mislav/bump-homebrew-formula-action@v2

|

||||

with:

|

||||

formula-name: prowler

|

||||

env:

|

||||

COMMITTER_TOKEN: ${{ secrets.PROWLER_ACCESS_TOKEN }}

|

||||

- name: Replicate PyPi Package

|

||||

run: |

|

||||

rm -rf ./dist && rm -rf ./build && rm -rf prowler.egg-info

|

||||

@@ -68,11 +76,3 @@ jobs:

|

||||

run: |

|

||||

poetry config pypi-token.pypi ${{ secrets.PYPI_API_TOKEN }}

|

||||

poetry publish

|

||||

# Create pull request to github.com/Homebrew/homebrew-core to update prowler formula

|

||||

- name: Bump Homebrew formula

|

||||

uses: mislav/bump-homebrew-formula-action@v2

|

||||

with:

|

||||

formula-name: prowler

|

||||

base-branch: release-${{ env.RELEASE_TAG }}

|

||||

env:

|

||||

COMMITTER_TOKEN: ${{ secrets.PROWLER_ACCESS_TOKEN }}

|

||||

|

||||

@@ -19,7 +19,6 @@ repos:

|

||||

hooks:

|

||||

- id: pretty-format-toml

|

||||

args: [--autofix]

|

||||

files: pyproject.toml

|

||||

|

||||

## BASH

|

||||

- repo: https://github.com/koalaman/shellcheck-precommit

|

||||

@@ -57,11 +56,10 @@ repos:

|

||||

args: ["--ignore=E266,W503,E203,E501,W605"]

|

||||

|

||||

- repo: https://github.com/python-poetry/poetry

|

||||

rev: 1.4.0 # add version here

|

||||

rev: 1.4.0 # add version here

|

||||

hooks:

|

||||

- id: poetry-check

|

||||

- id: poetry-lock

|

||||

args: ["--no-update"]

|

||||

|

||||

- repo: https://github.com/hadolint/hadolint

|

||||

rev: v2.12.1-beta

|

||||

@@ -76,15 +74,6 @@ repos:

|

||||

entry: bash -c 'pylint --disable=W,C,R,E -j 0 -rn -sn prowler/'

|

||||

language: system

|

||||

|

||||

- id: trufflehog

|

||||

name: TruffleHog

|

||||

description: Detect secrets in your data.

|

||||

# entry: bash -c 'trufflehog git file://. --only-verified --fail'

|

||||

# For running trufflehog in docker, use the following entry instead:

|

||||

entry: bash -c 'docker run -v "$(pwd):/workdir" -i --rm trufflesecurity/trufflehog:latest git file:///workdir --only-verified --fail'

|

||||

language: system

|

||||

stages: ["commit", "push"]

|

||||

|

||||

- id: pytest-check

|

||||

name: pytest-check

|

||||

entry: bash -c 'pytest tests -n auto'

|

||||

|

||||

43

README.md

@@ -11,10 +11,11 @@

|

||||

</p>

|

||||

<p align="center">

|

||||

<a href="https://join.slack.com/t/prowler-workspace/shared_invite/zt-1hix76xsl-2uq222JIXrC7Q8It~9ZNog"><img alt="Slack Shield" src="https://img.shields.io/badge/slack-prowler-brightgreen.svg?logo=slack"></a>

|

||||

<a href="https://pypi.org/project/prowler/"><img alt="Python Version" src="https://img.shields.io/pypi/v/prowler.svg"></a>

|

||||

<a href="https://pypi.python.org/pypi/prowler/"><img alt="Python Version" src="https://img.shields.io/pypi/pyversions/prowler.svg"></a>

|

||||

<a href="https://pypi.org/project/prowler-cloud/"><img alt="Python Version" src="https://img.shields.io/pypi/v/prowler.svg"></a>

|

||||

<a href="https://pypi.python.org/pypi/prowler-cloud/"><img alt="Python Version" src="https://img.shields.io/pypi/pyversions/prowler.svg"></a>

|

||||

<a href="https://pypistats.org/packages/prowler"><img alt="PyPI Prowler Downloads" src="https://img.shields.io/pypi/dw/prowler.svg?label=prowler%20downloads"></a>

|

||||

<a href="https://pypistats.org/packages/prowler-cloud"><img alt="PyPI Prowler-Cloud Downloads" src="https://img.shields.io/pypi/dw/prowler-cloud.svg?label=prowler-cloud%20downloads"></a>

|

||||

<a href="https://formulae.brew.sh/formula/prowler#default"><img alt="Brew Prowler Downloads" src="https://img.shields.io/homebrew/installs/dm/prowler?label=brew%20downloads"></a>

|

||||

<a href="https://hub.docker.com/r/toniblyx/prowler"><img alt="Docker Pulls" src="https://img.shields.io/docker/pulls/toniblyx/prowler"></a>

|

||||

<a href="https://hub.docker.com/r/toniblyx/prowler"><img alt="Docker" src="https://img.shields.io/docker/cloud/build/toniblyx/prowler"></a>

|

||||

<a href="https://hub.docker.com/r/toniblyx/prowler"><img alt="Docker" src="https://img.shields.io/docker/image-size/toniblyx/prowler"></a>

|

||||

@@ -33,14 +34,14 @@

|

||||

|

||||

# Description

|

||||

|

||||

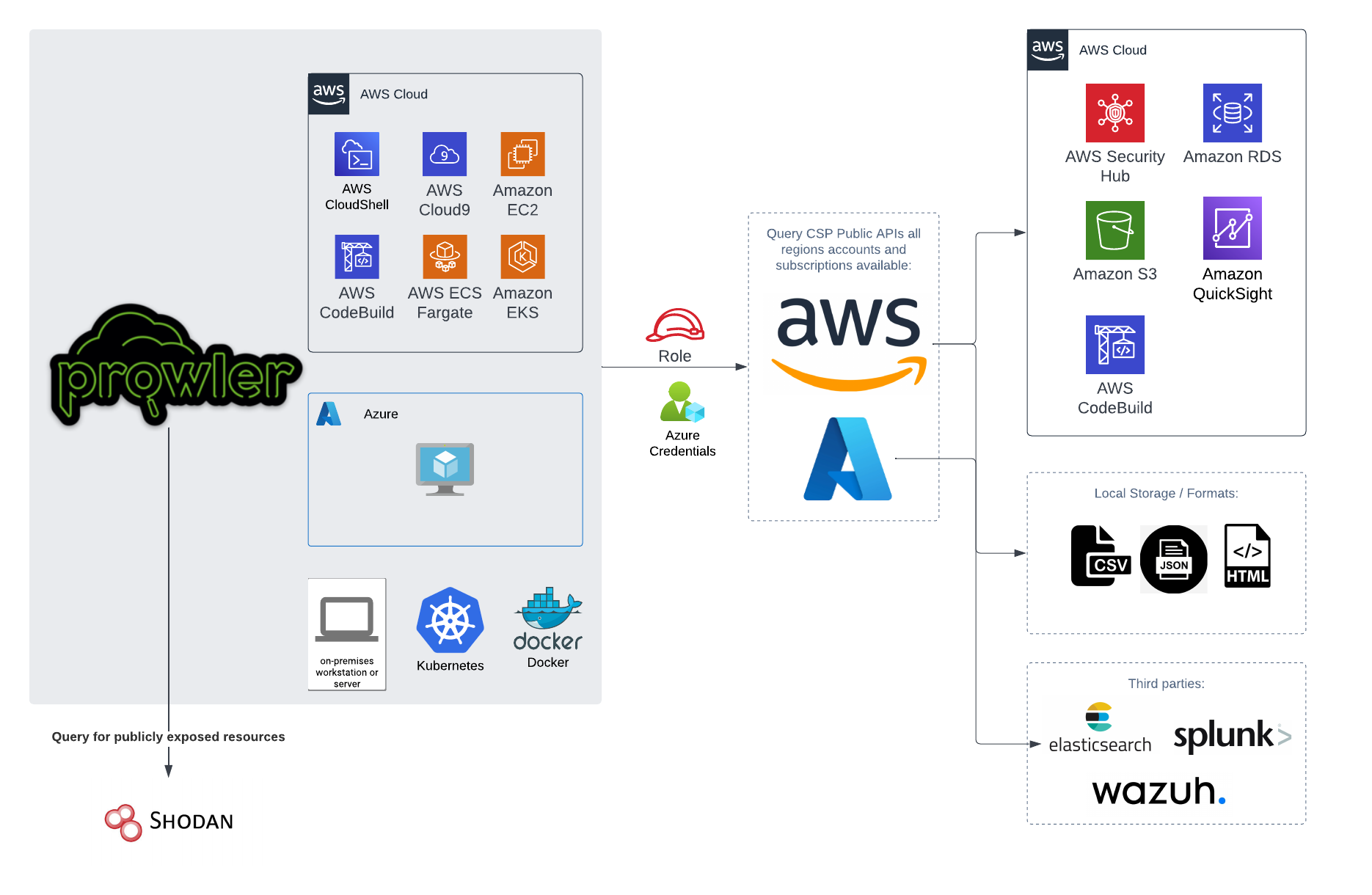

`Prowler` is an Open Source security tool to perform AWS, GCP and Azure security best practices assessments, audits, incident response, continuous monitoring, hardening and forensics readiness.

|

||||

`Prowler` is an Open Source security tool to perform AWS and Azure security best practices assessments, audits, incident response, continuous monitoring, hardening and forensics readiness.

|

||||

|

||||

It contains hundreds of controls covering CIS, PCI-DSS, ISO27001, GDPR, HIPAA, FFIEC, SOC2, AWS FTR, ENS and custom security frameworks.

|

||||

|

||||

# 📖 Documentation

|

||||

|

||||

The full documentation can now be found at [https://docs.prowler.cloud](https://docs.prowler.cloud)

|

||||

|

||||

|

||||

## Looking for Prowler v2 documentation?

|

||||

For Prowler v2 Documentation, please go to https://github.com/prowler-cloud/prowler/tree/2.12.1.

|

||||

|

||||

@@ -53,7 +54,7 @@ Prowler is available as a project in [PyPI](https://pypi.org/project/prowler-clo

|

||||

pip install prowler

|

||||

prowler -v

|

||||

```

|

||||

More details at https://docs.prowler.cloud

|

||||

More details at https://docs.prowler.cloud

|

||||

|

||||

## Containers

|

||||

|

||||

@@ -62,7 +63,7 @@ The available versions of Prowler are the following:

|

||||

- `latest`: in sync with master branch (bear in mind that it is not a stable version)

|

||||

- `<x.y.z>` (release): you can find the releases [here](https://github.com/prowler-cloud/prowler/releases), those are stable releases.

|

||||

- `stable`: this tag always point to the latest release.

|

||||

|

||||

|

||||

The container images are available here:

|

||||

|

||||

- [DockerHub](https://hub.docker.com/r/toniblyx/prowler/tags)

|

||||

@@ -84,7 +85,7 @@ python prowler.py -v

|

||||

|

||||

You can run Prowler from your workstation, an EC2 instance, Fargate or any other container, Codebuild, CloudShell and Cloud9.

|

||||

|

||||

|

||||

|

||||

|

||||

# 📝 Requirements

|

||||

|

||||

@@ -163,22 +164,6 @@ Regarding the subscription scope, Prowler by default scans all the subscriptions

|

||||

- `Reader`

|

||||

|

||||

|

||||

## Google Cloud Platform

|

||||

|

||||

Prowler will follow the same credentials search as [Google authentication libraries](https://cloud.google.com/docs/authentication/application-default-credentials#search_order):

|

||||

|

||||

1. [GOOGLE_APPLICATION_CREDENTIALS environment variable](https://cloud.google.com/docs/authentication/application-default-credentials#GAC)

|

||||

2. [User credentials set up by using the Google Cloud CLI](https://cloud.google.com/docs/authentication/application-default-credentials#personal)

|

||||

3. [The attached service account, returned by the metadata server](https://cloud.google.com/docs/authentication/application-default-credentials#attached-sa)

|

||||

|

||||

Those credentials must be associated to a user or service account with proper permissions to do all checks. To make sure, add the following roles to the member associated with the credentials:

|

||||

|

||||

- Viewer

|

||||

- Security Reviewer

|

||||

- Stackdriver Account Viewer

|

||||

|

||||

> `prowler` will scan the project associated with the credentials.

|

||||

|

||||

# 💻 Basic Usage

|

||||

|

||||

To run prowler, you will need to specify the provider (e.g aws or azure):

|

||||

@@ -253,14 +238,12 @@ prowler azure [--sp-env-auth, --az-cli-auth, --browser-auth, --managed-identity-

|

||||

```

|

||||

> By default, `prowler` will scan all Azure subscriptions.

|

||||

|

||||

## Google Cloud Platform

|

||||

|

||||

Optionally, you can provide the location of an application credential JSON file with the following argument:

|

||||

|

||||

```console

|

||||

prowler gcp --credentials-file path

|

||||

```

|

||||

# 🎉 New Features

|

||||

|

||||

- Python: we got rid of all bash and it is now all in Python.

|

||||

- Faster: huge performance improvements (same account from 2.5 hours to 4 minutes).

|

||||

- Developers and community: we have made it easier to contribute with new checks and new compliance frameworks. We also included unit tests.

|

||||

- Multi-cloud: in addition to AWS, we have added Azure, we plan to include GCP and OCI soon, let us know if you want to contribute!

|

||||

|

||||

# 📃 License

|

||||

|

||||

|

||||

@@ -79,21 +79,3 @@ Regarding the subscription scope, Prowler by default scans all the subscriptions

|

||||

|

||||

- `Security Reader`

|

||||

- `Reader`

|

||||

|

||||

## Google Cloud

|

||||

|

||||

### GCP Authentication

|

||||

|

||||

Prowler will follow the same credentials search as [Google authentication libraries](https://cloud.google.com/docs/authentication/application-default-credentials#search_order):

|

||||

|

||||

1. [GOOGLE_APPLICATION_CREDENTIALS environment variable](https://cloud.google.com/docs/authentication/application-default-credentials#GAC)

|

||||

2. [User credentials set up by using the Google Cloud CLI](https://cloud.google.com/docs/authentication/application-default-credentials#personal)

|

||||

3. [The attached service account, returned by the metadata server](https://cloud.google.com/docs/authentication/application-default-credentials#attached-sa)

|

||||

|

||||

Those credentials must be associated to a user or service account with proper permissions to do all checks. To make sure, add the following roles to the member associated with the credentials:

|

||||

|

||||

- Viewer

|

||||

- Security Reviewer

|

||||

- Stackdriver Account Viewer

|

||||

|

||||

> `prowler` will scan the project associated with the credentials.
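The credential search order listed above can be sketched in a few lines of Python. This is a simplified illustration using only the standard library, not Prowler's or Google's actual implementation. The function name `find_adc_source` and the `on_gcp_metadata` flag are hypothetical, and the well-known file path is the Linux/macOS default location used by the gcloud CLI.

```python
import os
from pathlib import Path
from typing import Mapping, Optional


def find_adc_source(
    env: Mapping[str, str],
    home: Path,
    on_gcp_metadata: bool = False,
) -> Optional[str]:
    """Return a label for the first credential source that would be picked."""
    # 1. Explicit path given in the GOOGLE_APPLICATION_CREDENTIALS variable.
    if env.get("GOOGLE_APPLICATION_CREDENTIALS"):
        return "environment variable"
    # 2. User credentials written by `gcloud auth application-default login`
    #    (assumed Linux/macOS location).
    well_known = home / ".config" / "gcloud" / "application_default_credentials.json"
    if well_known.is_file():
        return "gcloud user credentials"
    # 3. Service account attached to the resource (metadata server).
    #    A real lookup would query the metadata server; here it is a flag.
    if on_gcp_metadata:
        return "attached service account"
    return None


if __name__ == "__main__":
    print(find_adc_source(os.environ, Path.home()))
```

Running the script prints which of the three sources would win on the current machine, or `None` if none is configured, which can help when a scan picks up unexpected credentials.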

|

||||

|

||||

Image: 283 KiB before, 258 KiB after.

Image: 631 KiB before.

Image: 320 KiB before.

docs/img/quick-inventory.png (binary, normal file): 220 KiB after.

@@ -16,7 +16,7 @@ For **Prowler v2 Documentation**, please go [here](https://github.com/prowler-cl

|

||||

|

||||

## About Prowler

|

||||

|

||||

**Prowler** is an Open Source security tool to perform AWS, Azure and Google Cloud security best practices assessments, audits, incident response, continuous monitoring, hardening and forensics readiness.

|

||||

**Prowler** is an Open Source security tool to perform AWS and Azure security best practices assessments, audits, incident response, continuous monitoring, hardening and forensics readiness.

|

||||

|

||||

It contains hundreds of controls covering CIS, PCI-DSS, ISO27001, GDPR, HIPAA, FFIEC, SOC2, AWS FTR, ENS and custom security frameworks.

|

||||

|

||||

@@ -40,7 +40,7 @@ Prowler is available as a project in [PyPI](https://pypi.org/project/prowler-clo

|

||||

|

||||

* `Python >= 3.9`

|

||||

* `Python pip >= 3.9`

|

||||

* AWS, GCP and/or Azure credentials

|

||||

* AWS and/or Azure credentials

|

||||

|

||||

_Commands_:

|

||||

|

||||

@@ -54,7 +54,7 @@ Prowler is available as a project in [PyPI](https://pypi.org/project/prowler-clo

|

||||

_Requirements_:

|

||||

|

||||

* Have `docker` installed: https://docs.docker.com/get-docker/.

|

||||

* AWS, GCP and/or Azure credentials

|

||||

* AWS and/or Azure credentials

|

||||

* In the command below, change `-v` to your local directory path in order to access the reports.

|

||||

|

||||

_Commands_:

|

||||

@@ -71,7 +71,7 @@ Prowler is available as a project in [PyPI](https://pypi.org/project/prowler-clo

|

||||

|

||||

_Requirements for Ubuntu 20.04.3 LTS_:

|

||||

|

||||

* AWS, GCP and/or Azure credentials

|

||||

* AWS and/or Azure credentials

|

||||

* Install python 3.9 with: `sudo apt-get install python3.9`

|

||||

* Remove python 3.8 to avoid conflicts if you can: `sudo apt-get remove python3.8`

|

||||

* Make sure you have the python3 distutils package installed: `sudo apt-get install python3-distutils`

|

||||

@@ -91,7 +91,7 @@ Prowler is available as a project in [PyPI](https://pypi.org/project/prowler-clo

|

||||

|

||||

_Requirements for Developers_:

|

||||

|

||||

* AWS, GCP and/or Azure credentials

|

||||

* AWS and/or Azure credentials

|

||||

* `git`, `Python >= 3.9`, `pip` and `poetry` installed (`pip install poetry`)

|

||||

|

||||

_Commands_:

|

||||

@@ -108,7 +108,7 @@ Prowler is available as a project in [PyPI](https://pypi.org/project/prowler-clo

|

||||

|

||||

_Requirements_:

|

||||

|

||||

* AWS, GCP and/or Azure credentials

|

||||

* AWS and/or Azure credentials

|

||||

* Latest Amazon Linux 2 should come with Python 3.9 already installed however it may need pip. Install Python pip 3.9 with: `sudo dnf install -y python3-pip`.

|

||||

* Make sure setuptools for python is already installed with: `pip3 install setuptools`

|

||||

|

||||

@@ -125,7 +125,7 @@ Prowler is available as a project in [PyPI](https://pypi.org/project/prowler-clo

|

||||

_Requirements_:

|

||||

|

||||

* `Brew` installed in your Mac or Linux

|

||||

* AWS, GCP and/or Azure credentials

|

||||

* AWS and/or Azure credentials

|

||||

|

||||

_Commands_:

|

||||

|

||||

@@ -194,7 +194,7 @@ You can run Prowler from your workstation, an EC2 instance, Fargate or any other

|

||||

|

||||

## Basic Usage

|

||||

|

||||

To run Prowler, you will need to specify the provider (e.g aws, gcp or azure):

|

||||

To run Prowler, you will need to specify the provider (e.g aws or azure):

|

||||

> If no provider specified, AWS will be used for backward compatibility with most of v2 options.

|

||||

|

||||

```console

|

||||

@@ -226,7 +226,6 @@ For executing specific checks or services you can use options `-c`/`checks` or `

|

||||

```console

|

||||

prowler azure --checks storage_blob_public_access_level_is_disabled

|

||||

prowler aws --services s3 ec2

|

||||

prowler gcp --services iam compute

|

||||

```

|

||||

|

||||

Also, checks and services can be excluded with options `-e`/`--excluded-checks` or `--excluded-services`:

|

||||

@@ -234,7 +233,6 @@ Also, checks and services can be excluded with options `-e`/`--excluded-checks`

|

||||

```console

|

||||

prowler aws --excluded-checks s3_bucket_public_access

|

||||

prowler azure --excluded-services defender iam

|

||||

prowler gcp --excluded-services kms

|

||||

```

|

||||

|

||||

More options and executions methods that will save your time in [Miscelaneous](tutorials/misc.md).

|

||||

@@ -254,8 +252,6 @@ prowler aws --profile custom-profile -f us-east-1 eu-south-2

|

||||

```

|

||||

> By default, `prowler` will scan all AWS regions.

|

||||

|

||||

See more details about AWS Authentication in [Requirements](getting-started/requirements.md)

|

||||

|

||||

### Azure

|

||||

|

||||

With Azure you need to specify which auth method is going to be used:

|

||||

@@ -274,28 +270,9 @@ prowler azure --browser-auth

|

||||

prowler azure --managed-identity-auth

|

||||

```

|

||||

|

||||

See more details about Azure Authentication in [Requirements](getting-started/requirements.md)

|

||||

More details in [Requirements](getting-started/requirements.md)

|

||||

|

||||

Prowler by default scans all the subscriptions that is allowed to scan, if you want to scan a single subscription or various concrete subscriptions you can use the following flag (using az cli auth as example):

|

||||

```console

|

||||

prowler azure --az-cli-auth --subscription-ids <subscription ID 1> <subscription ID 2> ... <subscription ID N>

|

||||

```

|

||||

|

||||

### Google Cloud

|

||||

|

||||

Prowler will use by default your User Account credentials, you can configure it using:

|

||||

|

||||

- `gcloud init` to use a new account

|

||||

- `gcloud config set account <account>` to use an existing account

|

||||

|

||||

Then, obtain your access credentials using: `gcloud auth application-default login`

|

||||

|

||||

Otherwise, you can generate and download Service Account keys in JSON format (refer to https://cloud.google.com/iam/docs/creating-managing-service-account-keys) and provide the location of the file with the following argument:

|

||||

|

||||

```console

|

||||

prowler gcp --credentials-file path

|

||||

```

|

||||

|

||||

> `prowler` will scan the GCP project associated with the credentials.

|

||||

|

||||

See more details about GCP Authentication in [Requirements](getting-started/requirements.md)

|

||||

|

||||

@@ -7,52 +7,35 @@ You can use `-w`/`--allowlist-file` with the path of your allowlist yaml file, b

|

||||

|

||||

## Allowlist Yaml File Syntax

|

||||

|

||||

### Account, Check and/or Region can be * to apply for all the cases.

|

||||

### Resources and tags are lists that can have either Regex or Keywords.

|

||||

### Tags is an optional list that matches on tuples of 'key=value' and are "ANDed" together.

|

||||

### Use an alternation Regex to match one of multiple tags with "ORed" logic.

|

||||

### Account, Check and/or Region can be * to apply for all the cases

|

||||

### Resources is a list that can have either Regex or Keywords:

|

||||

########################### ALLOWLIST EXAMPLE ###########################

|

||||

Allowlist:

|

||||

Accounts:

|

||||

"123456789012":

|

||||

Checks:

|

||||

Checks:

|

||||

"iam_user_hardware_mfa_enabled":

|

||||

Regions:

|

||||

Regions:

|

||||

- "us-east-1"

|

||||

Resources:

|

||||

Resources:

|

||||

- "user-1" # Will ignore user-1 in check iam_user_hardware_mfa_enabled

|

||||

- "user-2" # Will ignore user-2 in check iam_user_hardware_mfa_enabled

|

||||

"ec2_*":

|

||||

Regions:

|

||||

- "*"

|

||||

Resources:

|

||||

- "*" # Will ignore every EC2 check in every account and region

|

||||

"*":

|

||||

Regions:

|

||||

Regions:

|

||||

- "*"

|

||||

Resources:

|

||||

- "test"

|

||||

Tags:

|

||||

- "test=test" # Will ignore every resource containing the string "test" and the tags 'test=test' and

|

||||

- "project=test|project=stage" # either of ('project=test' OR project=stage) in account 123456789012 and every region

|

||||

Resources:

|

||||

- "test" # Will ignore every resource containing the string "test" in every account and region

|

||||

|

||||

"*":

|

||||

Checks:

|

||||

Checks:

|

||||

"s3_bucket_object_versioning":

|

||||

Regions:

|

||||

Regions:

|

||||

- "eu-west-1"

|

||||

- "us-east-1"

|

||||

Resources:

|

||||

Resources:

|

||||

- "ci-logs" # Will ignore bucket "ci-logs" AND ALSO bucket "ci-logs-replica" in specified check and regions

|

||||

- "logs" # Will ignore EVERY BUCKET containing the string "logs" in specified check and regions

|

||||

- "[[:alnum:]]+-logs" # Will ignore all buckets containing the terms ci-logs, qa-logs, etc. in specified check and regions

|

||||

"*":

|

||||

Regions:

|

||||

- "*"

|

||||

Resources:

|

||||

- "*"

|

||||

Tags:

|

||||

- "environment=dev" # Will ignore every resource containing the tag 'environment=dev' in every account and region

|

||||

|

||||

|

||||

## Supported Allowlist Locations

|

||||

@@ -87,7 +70,6 @@ prowler aws -w arn:aws:dynamodb:<region_name>:<account_id>:table/<table_name>

|

||||

- Checks (String): This field can contain either a Prowler Check Name or an `*` (which applies to all the scanned checks).
- Regions (List): This field contains a list of regions where this allowlist rule is applied (it can also contain an `*` to apply to all scanned regions).
- Resources (List): This field contains a list of regular expressions matching the resources to be allowlisted.
- Tags (List): -Optional- This field contains a list of tuples in the form of 'key=value' matching the tags of the resources to be allowlisted.
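To make the field semantics above concrete, here is a minimal matching sketch. It is an assumption-laden illustration, not Prowler's matching code: the function names are hypothetical, regions are compared exactly, check keys are matched exactly or via a literal `*` (patterns such as `ec2_*` are not handled), resources are treated as regular expressions, and every `Tags` pattern must match at least one `key=value` tag of the resource. The dictionary mirrors what loading the YAML file would produce.

```python
import re
from typing import Any, Dict, List, Optional


def _region_ok(regions: List[str], region: str) -> bool:
    # "*" applies to every region; otherwise require an exact match.
    return "*" in regions or region in regions


def _resource_ok(patterns: List[str], resource: str) -> bool:
    # "*" applies to every resource; otherwise treat entries as regex/keywords.
    return any(p == "*" or re.search(p, resource) for p in patterns)


def is_allowlisted(
    allowlist: Dict[str, Any],
    account: str,
    check: str,
    region: str,
    resource: str,
    tags: Optional[Dict[str, str]] = None,
) -> bool:
    tag_strings = [f"{k}={v}" for k, v in (tags or {}).items()]
    accounts = allowlist["Allowlist"]["Accounts"]
    for account_key in (account, "*"):
        checks = accounts.get(account_key, {}).get("Checks", {})
        for check_key in (check, "*"):
            rule = checks.get(check_key)
            if rule is None:
                continue
            if not _region_ok(rule["Regions"], region):
                continue
            if not _resource_ok(rule["Resources"], resource):
                continue
            # Tag patterns are ANDed: each one must match some resource tag.
            if all(any(re.search(p, t) for t in tag_strings)
                   for p in rule.get("Tags", [])):
                return True
    return False


ALLOWLIST = {
    "Allowlist": {
        "Accounts": {
            "123456789012": {
                "Checks": {
                    "iam_user_hardware_mfa_enabled": {
                        "Regions": ["us-east-1"],
                        "Resources": ["user-1", "user-2"],
                    }
                }
            },
            "*": {
                "Checks": {
                    "*": {
                        "Regions": ["*"],
                        "Resources": ["*"],
                        "Tags": ["environment=dev"],
                    }
                }
            },
        }
    }
}
```

An alternation pattern such as `project=test|project=stage` works with this sketch unchanged, because each tag pattern is applied with `re.search` against the `key=value` strings.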

|

||||

|

||||

<img src="../img/allowlist-row.png"/>

|

||||

|

||||

@@ -119,7 +101,7 @@ generates an Allowlist:

|

||||

```

|

||||

def handler(event, context):

|

||||

checks = {}

|

||||

checks["vpc_flow_logs_enabled"] = { "Regions": [ "*" ], "Resources": [ "" ], Optional("Tags"): [ "key:value" ] }

|

||||

checks["vpc_flow_logs_enabled"] = { "Regions": [ "*" ], "Resources": [ "" ] }

|

||||

|

||||

al = { "Allowlist": { "Accounts": { "*": { "Checks": checks } } } }

|

||||

return al

|

||||

|

||||

@@ -13,7 +13,7 @@ Before sending findings to Prowler, you will need to perform next steps:

|

||||

- Using the AWS Management Console:

|

||||

|

||||

3. Allow Prowler to import its findings to AWS Security Hub by adding the policy below to the role or user running Prowler:

|

||||

- [prowler-security-hub.json](https://github.com/prowler-cloud/prowler/blob/master/permissions/prowler-security-hub.json)

|

||||

- [prowler-security-hub.json](https://github.com/prowler-cloud/prowler/blob/master/iam/prowler-security-hub.json)

|

||||

|

||||

Once it is enabled, it is as simple as running the command below (for all regions):

|

||||

|

||||

@@ -29,34 +29,14 @@ prowler -S -f eu-west-1

|

||||

|

||||

> **Note 1**: It is recommended to send only fails to Security Hub and that is possible adding `-q` to the command.

|

||||

|

||||

> **Note 2**: Since Prowler perform checks to all regions by default you may need to filter by region when runing Security Hub integration, as shown in the example above. Remember to enable Security Hub in the region or regions you need by calling `aws securityhub enable-security-hub --region <region>` and run Prowler with the option `-f <region>` (if no region is used it will try to push findings in all regions hubs). Prowler will send findings to the Security Hub on the region where the scanned resource is located.

|

||||

> **Note 2**: Since Prowler perform checks to all regions by defauls you may need to filter by region when runing Security Hub integration, as shown in the example above. Remember to enable Security Hub in the region or regions you need by calling `aws securityhub enable-security-hub --region <region>` and run Prowler with the option `-f <region>` (if no region is used it will try to push findings in all regions hubs).

|

||||

|

||||

> **Note 3**: To have updated findings in Security Hub you have to run Prowler periodically. Once a day or every certain amount of hours.

|

||||

> **Note 3** to have updated findings in Security Hub you have to run Prowler periodically. Once a day or every certain amount of hours.

|

||||

|

||||

Once you run findings for first time you will be able to see Prowler findings in Findings section:

|

||||

|

||||

|

||||

|

||||

## Send findings to Security Hub assuming an IAM Role

|

||||

|

||||

When you are auditing a multi-account AWS environment, you can send findings to a Security Hub of another account by assuming an IAM role from that account using the `-R` flag in the Prowler command:

|

||||

|

||||

```sh

|

||||

prowler -S -R arn:aws:iam::123456789012:role/ProwlerExecRole

|

||||

```

|

||||

|

||||

> Remember that the used role needs to have permissions to send findings to Security Hub. To get more information about the permissions required, please refer to the following IAM policy [prowler-security-hub.json](https://github.com/prowler-cloud/prowler/blob/master/permissions/prowler-security-hub.json)

|

||||

|

||||

|

||||

## Send only failed findings to Security Hub

|

||||

|

||||

When using Security Hub it is recommended to send only the failed findings generated. To follow that recommendation you could add the `-q` flag to the Prowler command:

|

||||

|

||||

```sh

|

||||

prowler -S -q

|

||||

```
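When Prowler is launched from a scheduler or wrapper script, the flags discussed in this section can be assembled programmatically. The helper below is a hypothetical sketch (the function name and parameters are not part of Prowler); it only combines the flags shown above: `-S`, `-f`, `-q` and `-R`.

```python
from typing import List, Optional, Sequence


def build_security_hub_command(
    regions: Optional[Sequence[str]] = None,
    only_failed: bool = False,
    role_arn: Optional[str] = None,
) -> List[str]:
    """Assemble a Prowler command line that sends findings to Security Hub."""
    cmd = ["prowler", "-S"]
    if regions:
        # Limit the scan (and the Security Hub regions) to the given regions.
        cmd += ["-f", *regions]
    if only_failed:
        # Send only failed findings.
        cmd.append("-q")
    if role_arn:
        # Assume an IAM role, e.g. to reach another account's Security Hub.
        cmd += ["-R", role_arn]
    return cmd


if __name__ == "__main__":
    print(" ".join(build_security_hub_command(["eu-west-1"], only_failed=True)))
```

The resulting list can be handed to `subprocess.run(cmd, check=True)`; building a list rather than a shell string avoids quoting problems with role ARNs.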

|

||||

|

||||

|

||||

## Skip sending updates of findings to Security Hub

|

||||

|

||||

By default, Prowler archives all its findings in Security Hub that have not appeared in the last scan.

|

||||

|

||||

@@ -31,7 +31,7 @@ checks_v3_to_v2_mapping = {

|

||||

"awslambda_function_url_cors_policy": "extra7180",

|

||||

"awslambda_function_url_public": "extra7179",

|

||||

"awslambda_function_using_supported_runtimes": "extra762",

|

||||

"cloudformation_stack_outputs_find_secrets": "extra742",

|

||||

"cloudformation_outputs_find_secrets": "extra742",

|

||||

"cloudformation_stacks_termination_protection_enabled": "extra7154",

|

||||

"cloudfront_distributions_field_level_encryption_enabled": "extra767",

|

||||

"cloudfront_distributions_geo_restrictions_enabled": "extra732",

|

||||

@@ -113,6 +113,7 @@ checks_v3_to_v2_mapping = {

|

||||

"ec2_securitygroup_allow_wide_open_public_ipv4": "extra778",

|

||||

"ec2_securitygroup_default_restrict_traffic": "check43",

|

||||

"ec2_securitygroup_from_launch_wizard": "extra7173",

|

||||

"ec2_securitygroup_in_use_without_ingress_filtering": "extra74",

|

||||

"ec2_securitygroup_not_used": "extra75",

|

||||

"ec2_securitygroup_with_many_ingress_egress_rules": "extra777",

|

||||

"ecr_repositories_lifecycle_policy_enabled": "extra7194",

|

||||

@@ -137,6 +138,7 @@ checks_v3_to_v2_mapping = {

|

||||

"elbv2_internet_facing": "extra79",

|

||||

"elbv2_listeners_underneath": "extra7158",

|

||||

"elbv2_logging_enabled": "extra717",

|

||||

"elbv2_request_smugling": "extra7142",

|

||||

"elbv2_ssl_listeners": "extra793",

|

||||

"elbv2_waf_acl_attached": "extra7129",

|

||||

"emr_cluster_account_public_block_enabled": "extra7178",

|

||||

|

||||
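As an illustration of how a mapping like `checks_v3_to_v2_mapping` might be used in the other direction, the sketch below inverts a small excerpt of it to look up the v3 check name for a v2 identifier. The helper `v2_to_v3` is hypothetical and only a few entries from the hunk above are copied in. If two v3 names ever shared the same v2 identifier, the later entry would silently win in this simple inversion.

```python
from typing import Dict, Optional

# Excerpt of the mapping shown in the diff above.
checks_v3_to_v2_mapping: Dict[str, str] = {
    "awslambda_function_url_cors_policy": "extra7180",
    "awslambda_function_url_public": "extra7179",
    "ec2_securitygroup_default_restrict_traffic": "check43",
    "elbv2_ssl_listeners": "extra793",
}


def v2_to_v3(
    v2_name: str,
    mapping: Dict[str, str] = checks_v3_to_v2_mapping,
) -> Optional[str]:
    """Return the v3 check name for a v2 identifier, or None if unknown."""
    inverted = {v2: v3 for v3, v2 in mapping.items()}
    return inverted.get(v2_name)
```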

@@ -81,4 +81,36 @@ Standard results will be shown and additionally the framework information as the

|

||||

|

||||

## Create and contribute adding other Security Frameworks

This information is part of the Developer Guide and can be found here: https://docs.prowler.cloud/en/latest/tutorials/developer-guide/.

If you want to create or contribute your own security frameworks, or add public ones to Prowler, first make sure the checks you need are available; if not, you have to create your own. Then create a compliance file per provider, like those in `prowler/compliance/aws/`, name it `<framework>_<version>_<provider>.json`, and follow the format below.

Each version of a framework has its own file with the following high-level structure, which identifies the framework in general terms. A requirement can also be called a control, and one requirement can be linked to multiple Prowler checks:

- `Framework`: string. Distinguishing name of the framework, like CIS.
- `Provider`: string. Provider where the framework applies, such as AWS, Azure, OCI,...
- `Version`: string. Version of the framework itself, like 1.4 for CIS.
- `Requirements`: array of objects. Include all requirements or controls with the mapping to Prowler.
- `Requirements_Id`: string. Unique identifier for each requirement in the specific framework.
- `Requirements_Description`: string. Description as in the framework.
- `Requirements_Attributes`: array of objects. Includes all needed attributes for each requirement, like levels, sections, etc.: whatever helps to create a dedicated report with the result of the findings. Attributes should follow the framework's own terminology as closely as possible.
- `Requirements_Checks`: array. Prowler checks that are needed to prove this requirement. It can be one or multiple checks. If no automation is possible, this can be empty.

```
{
  "Framework": "<framework>-<provider>",
  "Version": "<version>",
  "Requirements": [
    {
      "Id": "<unique-id>",
      "Description": "Requirement full description",
      "Checks": [
        "Here is the prowler check or checks that is going to be executed"
      ],
      "Attributes": [
        {
          <Add here your custom attributes.>
        }
      ]
    }
  ]
}
```

Finally, to have a proper output file for your reports, your framework data model has to be created in `prowler/lib/outputs/models.py` and also the CLI table output in `prowler/lib/outputs/compliance.py`.
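Before wiring a new framework into the output models, it can help to sanity-check the shape of the compliance file. The sketch below is a hypothetical helper, not part of Prowler: it uses the key names from the JSON template above (`Framework`, `Version`, `Requirements`, `Id`, `Description`, `Checks`, `Attributes`), and the example document, including its `Section` attribute, is made up for illustration.

```python
from typing import Any, Dict, List

REQUIRED_TOP_LEVEL = ("Framework", "Version", "Requirements")
REQUIRED_PER_REQUIREMENT = ("Id", "Description", "Checks", "Attributes")


def compliance_filename(framework: str, version: str, provider: str) -> str:
    """Build a file name following <framework>_<version>_<provider>.json."""
    return f"{framework}_{version}_{provider}.json"


def validate_framework(doc: Dict[str, Any]) -> List[str]:
    """Return a list of problems; an empty list means the shape looks right."""
    problems = [
        f"missing top-level key: {key}"
        for key in REQUIRED_TOP_LEVEL
        if key not in doc
    ]
    for index, requirement in enumerate(doc.get("Requirements", [])):
        for key in REQUIRED_PER_REQUIREMENT:
            if key not in requirement:
                problems.append(f"requirement {index}: missing {key}")
        # "Checks" may be empty when no automation is possible,
        # but it should still be a list.
        if not isinstance(requirement.get("Checks", []), list):
            problems.append(f"requirement {index}: Checks must be a list")
    return problems


example = {
    "Framework": "CIS-AWS",
    "Version": "1.4",
    "Requirements": [
        {
            "Id": "1.1",
            "Description": "Example requirement (illustrative text)",
            "Checks": ["iam_user_hardware_mfa_enabled"],
            "Attributes": [{"Section": "1"}],
        }
    ],
}
```

Calling `validate_framework(json.load(open(path)))` on a draft file gives a quick list of missing keys before running Prowler against it.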

|

||||

|

||||

@@ -1,281 +0,0 @@

|

||||

# Developer Guide

|

||||

|

||||

You can extend Prowler in many different ways, in most cases you will want to create your own checks and compliance security frameworks, here is where you can learn about how to get started with it. We also include how to create custom outputs, integrations and more.

|

||||

|

||||

## Get the code and install all dependencies

|

||||

|

||||

First of all, you need a version of Python 3.9 or higher and also pip installed to be able to install all dependencies requred. Once that is satisfied go a head and clone the repo:

|

||||

|

||||

```

|

||||

git clone https://github.com/prowler-cloud/prowler

|

||||

cd prowler

|

||||

```

|

||||

For isolation and avoid conflicts with other environments, we recommend usage of `poetry`:

|

||||

```

|

||||

pip install poetry

|

||||

```

|

||||

Then install all dependencies including the ones for developers:

|

||||

```

|

||||

poetry install

|

||||

poetry shell

|

||||

```

|

||||

|

||||

## Contributing with your code or fixes to Prowler

|

||||

|

||||

This repo has git pre-commit hooks managed via the pre-commit tool. Install it however you like, then in the root of this repo run:

|

||||

```

|

||||

pre-commit install

|

||||

```

|

||||

You should get an output like the following:

|

||||

```

|

||||

pre-commit installed at .git/hooks/pre-commit

|

||||

```

|

||||

|

||||

Before we merge any of your pull requests, we run checks against the code. We use the following tools and automation to make sure the code is secure and the dependencies are up to date (these should already be installed if you ran `pipenv install -d`):

|

||||

|

||||

- `bandit` for code security review.

|

||||

- `safety` and `dependabot` for dependencies.

|

||||

- `hadolint` and `dockle` for our containers security.

|

||||

- `snyk` in Docker Hub.

|

||||

- `clair` in Amazon ECR.

|

||||

- `vulture`, `flake8`, `black` and `pylint` for formatting and best practices.

|

||||

|

||||

You can see all dependencies in file `Pipfile`.

|

||||

|

||||

## Create a new check for a Provider

|

||||

|

||||

### If the check you want to create belongs to an existing service

|

||||

|

||||

To create a new check, you will need to create a folder inside the specific service, i.e. `prowler/providers/<provider>/services/<service>/<check_name>/`, with the name of check following the pattern: `service_subservice_action`.

|

||||

Inside that folder, create the following files:

|

||||

|

||||

- An empty `__init__.py`: to make Python treat this check folder as a package.

|

||||

- A `check_name.py` containing the check's logic, for example:

|

||||

```

|

||||

# Import the Check_Report of the specific provider
from prowler.lib.check.models import Check, Check_Report_AWS
# Import the client of the specific service
from prowler.providers.aws.services.ec2.ec2_client import ec2_client


# Create the class for the check
class ec2_ebs_volume_encryption(Check):
    def execute(self):
        findings = []
        # Iterate over the service's assets to be analyzed
        for volume in ec2_client.volumes:
            # Initialize a Check Report for each item and assign the region, resource_id, resource_arn and resource_tags
            report = Check_Report_AWS(self.metadata())
            report.region = volume.region
            report.resource_id = volume.id
            report.resource_arn = volume.arn
            report.resource_tags = volume.tags
            # Apply the check logic and set a PASS or a FAIL status with its status_extended
            if volume.encrypted:
                report.status = "PASS"
                report.status_extended = f"EBS Volume {volume.id} is encrypted."
            else:
                report.status = "FAIL"
                report.status_extended = f"EBS Volume {volume.id} is unencrypted."
            findings.append(report)  # Append a report for each item

        return findings

|

||||

```

|

||||

- A `check_name.metadata.json` containing the check's metadata, for example:

|

||||

```

|

||||

{
  "Provider": "aws",
  "CheckID": "ec2_ebs_volume_encryption",
  "CheckTitle": "Ensure there are no EBS Volumes unencrypted.",
  "CheckType": [
    "Data Protection"
  ],
  "ServiceName": "ec2",
  "SubServiceName": "volume",
  "ResourceIdTemplate": "arn:partition:service:region:account-id:resource-id",
  "Severity": "medium",
  "ResourceType": "AwsEc2Volume",
  "Description": "Ensure there are no EBS Volumes unencrypted.",
  "Risk": "Data encryption at rest prevents data visibility in the event of its unauthorized access or theft.",
  "RelatedUrl": "",
  "Remediation": {
    "Code": {
      "CLI": "",
      "NativeIaC": "",
      "Other": "",
      "Terraform": ""
    },
    "Recommendation": {
      "Text": "Encrypt all EBS volumes and Enable Encryption by default You can configure your AWS account to enforce the encryption of the new EBS volumes and snapshot copies that you create. For example; Amazon EBS encrypts the EBS volumes created when you launch an instance and the snapshots that you copy from an unencrypted snapshot.",
      "Url": "https://docs.aws.amazon.com/AWSEC2/latest/UserGuide/EBSEncryption.html"
    }
  },
  "Categories": [
    "encryption"
  ],
  "DependsOn": [],
  "RelatedTo": [],
  "Notes": ""
}

|

||||

```
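The folder layout described above can be sketched as a small scaffolding helper. This is only an illustration, not part of Prowler: the function `scaffold_check`, the name regex, and the stub file contents are assumptions, and the generated metadata holds just three of the keys shown in the example above.

```python
import json
import re
import tempfile
from pathlib import Path

# Assumed reading of the `service_subservice_action` pattern:
# lowercase words joined by underscores, at least two words.
CHECK_NAME_RE = re.compile(r"^[a-z0-9]+(_[a-z0-9]+)+$")


def scaffold_check(repo_root: Path, provider: str, service: str, check_name: str) -> Path:
    """Create the three files a check folder is expected to contain (hypothetical helper)."""
    if not CHECK_NAME_RE.match(check_name) or not check_name.startswith(f"{service}_"):
        raise ValueError(f"{check_name!r} does not look like '{service}_<subservice>_<action>'")

    check_dir = repo_root / "prowler" / "providers" / provider / "services" / service / check_name
    check_dir.mkdir(parents=True, exist_ok=True)

    # Empty __init__.py so Python treats the folder as a package.
    (check_dir / "__init__.py").touch()
    # Stub for the check's logic; the real class still has to be written.
    (check_dir / f"{check_name}.py").write_text(f"# TODO: implement class {check_name}(Check)\n")
    # Minimal metadata stub; the remaining keys must be filled in by hand.
    metadata = {"Provider": provider, "CheckID": check_name, "ServiceName": service}
    (check_dir / f"{check_name}.metadata.json").write_text(json.dumps(metadata, indent=2))
    return check_dir


# Usage: scaffold into a temporary directory and list what was created.
with tempfile.TemporaryDirectory() as tmp:
    created = scaffold_check(Path(tmp), "aws", "ec2", "ec2_ebs_volume_encryption")
    print(sorted(p.name for p in created.iterdir()))
```

Rejecting names that do not start with the service name keeps the folder name, the file names and the `CheckID` aligned, which is what the examples above rely on.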

|

||||

|

||||

### If the check you want to create belongs to a service not supported already by Prowler you will need to create a new service first

|

||||

|

||||

To create a new service, you will need to create a folder inside the specific provider, i.e. `prowler/providers/<provider>/services/<service>/`.

|

||||

Inside that folder, create the following files:

|

||||

|

||||

- An empty `__init__.py`: to make Python treat this service folder as a package.

|

||||

- A `<service>_service.py`, containing all the service's logic and API Calls:

|

||||

```

|

||||

# You must import the following libraries
import threading
from typing import Optional

from pydantic import BaseModel

from prowler.lib.logger import logger
from prowler.lib.scan_filters.scan_filters import is_resource_filtered
from prowler.providers.aws.aws_provider import generate_regional_clients


# Create a class for the Service
################## <Service>
class <Service>:
    def __init__(self, audit_info):
        self.service = "<service>"  # The name of the service boto3 client
        self.session = audit_info.audit_session
        self.audited_account = audit_info.audited_account
        self.audit_resources = audit_info.audit_resources
        self.regional_clients = generate_regional_clients(self.service, audit_info)
        self.<items> = []  # Create an empty list of the items to be gathered, e.g., instances
        self.__threading_call__(self.__describe_<items>__)
        self.__describe_<item>__()  # Optionally you can create another function to retrieve more data about each item

    def __get_session__(self):
        return self.session

    def __threading_call__(self, call):
        # Run the given call once per regional client, one thread per region
        threads = []
        for regional_client in self.regional_clients.values():
            threads.append(threading.Thread(target=call, args=(regional_client,)))
        for t in threads:
            t.start()
        for t in threads:
            t.join()

    def __describe_<items>__(self, regional_client):
        """Get ALL <Service> <Items>"""
        logger.info("<Service> - Describing <Items>...")
        try:
            describe_<items>_paginator = regional_client.get_paginator("describe_<items>")  # Paginator to get every item
            for page in describe_<items>_paginator.paginate():
                for <item> in page["<Items>"]:
                    if not self.audit_resources or (
                        is_resource_filtered(<item>["<item_arn>"], self.audit_resources)
                    ):
                        self.<items>.append(
                            <Item>(
                                arn=<item>["<item_arn>"],
                                name=<item>["<item_name>"],
                                tags=<item>.get("Tags", []),
                                region=regional_client.region,
                            )
                        )
        except Exception as error:
            logger.error(
                f"{regional_client.region} -- {error.__class__.__name__}[{error.__traceback__.tb_lineno}]: {error}"
            )

    def __describe_<item>__(self):
        """Get Details for a <Service> <Item>"""
        logger.info("<Service> - Describing <Item> to get specific details...")
        try:
            for <item> in self.<items>:
                <item>_details = self.regional_clients[<item>.region].describe_<item>(
                    <Attribute>=<item>.name
                )
                # For example, check if item is Public
                <item>.public = <item>_details.get("Public", False)

        except Exception as error:
            logger.error(
                f"{<item>.region} -- {error.__class__.__name__}[{error.__traceback__.tb_lineno}]: {error}"
            )


class <Item>(BaseModel):
    """<Item> holds a <Service> <Item>"""

    arn: str
    """<Items>[].Arn"""
    name: str
    """<Items>[].Name"""
    public: bool = False  # Not known at creation time; set later by __describe_<item>__
    """<Items>[].Public"""
    tags: Optional[list] = []
    region: str

|

||||

|

||||

```

|

||||

- A `<service>_client.py`, containing the initialization of the service's class we have just created so the service's checks can use it:

|

||||

```

|

||||

from prowler.providers.aws.lib.audit_info.audit_info import current_audit_info

|

||||

from prowler.providers.aws.services.<service>.<service>_service import <Service>

|

||||

|

||||

<service>_client = <Service>(current_audit_info)

|

||||

```
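The per-region fan-out used by `__threading_call__` in the service template can be tried in isolation. The sketch below is a simplified illustration, not Prowler code: `FakeRegionalClient`, `FakeService` and `list_items` are hypothetical stand-ins for the boto3 regional clients and the service class.

```python
import threading
from dataclasses import dataclass, field


@dataclass
class FakeRegionalClient:
    """Hypothetical stand-in for a boto3 regional client."""

    region: str
    items: list = field(default_factory=list)

    def list_items(self):
        return self.items


class FakeService:
    """Hypothetical service that gathers items from every region in parallel."""

    def __init__(self, regional_clients):
        self.regional_clients = regional_clients
        self.items = []
        self.__threading_call__(self.__describe_items__)

    def __threading_call__(self, call):
        # One thread per regional client; wait for all of them before returning.
        threads = [
            threading.Thread(target=call, args=(client,))
            for client in self.regional_clients.values()
        ]
        for t in threads:
            t.start()
        for t in threads:
            t.join()

    def __describe_items__(self, regional_client):
        for name in regional_client.list_items():
            # list.append is thread-safe in CPython, so no explicit lock is used here.
            self.items.append((regional_client.region, name))


clients = {
    "eu-west-1": FakeRegionalClient("eu-west-1", ["a", "b"]),
    "us-east-1": FakeRegionalClient("us-east-1", ["c"]),
}
service = FakeService(clients)
# Threads may finish in any order, so sort before comparing or printing.
print(sorted(service.items))
```

Because every thread is joined before `__init__` returns, the gathered list is complete by the time a check reads it; only the order of the entries is not guaranteed.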

|

||||

|

||||

## Create a new security compliance framework

|

||||

|

||||

If you want to create or contribute your own security frameworks, or add public ones to Prowler, first make sure the required checks are available; if they are not, you have to create them. Then create a compliance file per provider, as in `prowler/compliance/aws/`, name it `<framework>_<version>_<provider>.json`, and follow the format below.

|

||||

|

||||

Each file version of a framework has the following high-level structure. Each framework needs to be generally identified. A requirement can also be called a control, and one requirement can be linked to multiple Prowler checks:

|

||||

|

||||

- `Framework`: string. Distinguishing name of the framework, like CIS.

|

||||

- `Provider`: string. Provider where the framework applies, such as AWS, Azure, OCI,...

|

||||

- `Version`: string. Version of the framework itself, like 1.4 for CIS.

|

||||

- `Requirements`: array of objects. Include all requirements or controls with the mapping to Prowler.

|

||||

- `Requirements_Id`: string. Unique identifier per each requirement in the specific framework

|

||||

- `Requirements_Description`: string. Description as in the framework.

|

||||

- `Requirements_Attributes`: array of objects. Includes all needed attributes per each requirement, like levels, sections, etc. Whatever helps to create a dedicated report with the result of the findings. Attributes would be taken as closely as possible from the framework's own terminology directly.

|

||||

- `Requirements_Checks`: array. Prowler checks that are needed to prove this requirement. It can be one or multiple checks. In case of no automation possible this can be empty.

|

||||

|

||||

```

|

||||

{
  "Framework": "<framework>-<provider>",
  "Version": "<version>",
  "Requirements": [
    {
      "Id": "<unique-id>",
      "Description": "Requirement full description",
      "Checks": [
        "Here is the Prowler check or checks that are going to be executed"
      ],
      "Attributes": [
        {
          <Add here your custom attributes.>
        }
      ]
    },
    ...
  ]
}

|

||||

```

|

||||

|

||||

Finally, to have a proper output file for your reports, your framework data model has to be created in `prowler/lib/outputs/models.py` and also the CLI table output in `prowler/lib/outputs/compliance.py`.
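Before wiring a new framework into the outputs, it can help to sanity-check the JSON shape described above. The following is a minimal sketch under stated assumptions: `validate_framework` is a hypothetical helper, not a Prowler function, and the required keys are taken only from the template in this section.

```python
import json

# Keys taken from the template above; this is an assumption, not Prowler's own schema.
REQUIRED_TOP_LEVEL = ("Framework", "Version", "Requirements")
REQUIRED_PER_REQUIREMENT = ("Id", "Description", "Checks", "Attributes")


def validate_framework(framework: dict) -> list:
    """Return a list of problems; an empty list means the shape looks right."""
    problems = [
        f"missing top-level key: {key}"
        for key in REQUIRED_TOP_LEVEL
        if key not in framework
    ]
    seen_ids = set()
    for index, requirement in enumerate(framework.get("Requirements", [])):
        for key in REQUIRED_PER_REQUIREMENT:
            if key not in requirement:
                problems.append(f"requirement #{index}: missing key {key}")
        req_id = requirement.get("Id")
        # Ids are described as unique per requirement, so flag repeats.
        if req_id is not None and req_id in seen_ids:
            problems.append(f"requirement #{index}: duplicate Id {req_id}")
        seen_ids.add(req_id)
    return problems


example = json.loads(
    """
    {
      "Framework": "CIS-AWS",
      "Version": "1.4",
      "Requirements": [
        {"Id": "1.1", "Description": "Example requirement", "Checks": [], "Attributes": [{}]}
      ]
    }
    """
)
print(validate_framework(example))
```

An empty `Checks` array is accepted on purpose, since a requirement with no possible automation may leave it empty.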

|

||||

|

||||

|

||||

## Create a custom output format

|

||||

|

||||

## Create a new integration

|

||||

|

||||

## Contribute with documentation

|

||||

|

||||

We use `mkdocs` to build this Prowler documentation site so you can easily contribute back with new docs or improvements to existing ones.

|

||||

|

||||

1. Install `mkdocs` with your favorite package manager.

|

||||

2. Inside the `prowler` repository folder run `mkdocs serve` and point your browser to `http://localhost:8000` and you will see live changes to your local copy of this documentation site.

|

||||

3. Make all needed changes to docs or add new documents. To do so just edit existing md files inside `prowler/docs` and if you are adding a new section or file please make sure you add it to `mkdocs.yaml` file in the root folder of the Prowler repo.

|

||||

4. Once you are done with changes, please send a pull request to us for review and merge. Thank you in advance!

|

||||

|

||||

## Want some swag as appreciation for your contribution?

|

||||

|

||||

If you are like us and you love swag, we are happy to thank you for your contribution with some laptop stickers or whatever other swag we may have at that time. Please, tell us more details and your pull request link in our [Slack workspace here](https://join.slack.com/t/prowler-workspace/shared_invite/zt-1hix76xsl-2uq222JIXrC7Q8It~9ZNog). You can also reach out to Toni de la Fuente on Twitter [here](https://twitter.com/ToniBlyx), his DMs are open.

|

||||

@@ -1,29 +0,0 @@

|

||||

# GCP authentication

|

||||

|

||||

By default, Prowler uses your User Account credentials. You can configure them using:

|

||||

|

||||

- `gcloud init` to use a new account

|

||||

- `gcloud config set account <account>` to use an existing account

|

||||

|

||||

Then, obtain your access credentials using: `gcloud auth application-default login`

|

||||

|

||||

Otherwise, you can generate and download Service Account keys in JSON format (refer to https://cloud.google.com/iam/docs/creating-managing-service-account-keys) and provide the location of the file with the following argument:

|

||||

|

||||

```console

|

||||

prowler gcp --credentials-file path

|

||||

```

|

||||

|

||||

> `prowler` will scan the GCP project associated with the credentials.

|

||||

|

||||

|

||||

Prowler will follow the same credentials search as [Google authentication libraries](https://cloud.google.com/docs/authentication/application-default-credentials#search_order):

|

||||

|

||||

1. [GOOGLE_APPLICATION_CREDENTIALS environment variable](https://cloud.google.com/docs/authentication/application-default-credentials#GAC)

|

||||

2. [User credentials set up by using the Google Cloud CLI](https://cloud.google.com/docs/authentication/application-default-credentials#personal)

|

||||

3. [The attached service account, returned by the metadata server](https://cloud.google.com/docs/authentication/application-default-credentials#attached-sa)
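The search order above can be approximated locally to see which source is likely to be picked up. This is a rough sketch using only the standard library, not how Prowler or the Google libraries are implemented: `guess_credentials_source` is a hypothetical helper, and the file path is the assumed Linux/macOS location written by `gcloud auth application-default login`.

```python
import os
from pathlib import Path
from typing import Optional


def guess_credentials_source(environ=None, home: Optional[Path] = None) -> str:
    """Guess which credentials source would be used, following the order listed above."""
    environ = os.environ if environ is None else environ
    home = Path.home() if home is None else home

    # 1. Explicit key file referenced by the environment variable.
    if environ.get("GOOGLE_APPLICATION_CREDENTIALS"):
        return "GOOGLE_APPLICATION_CREDENTIALS environment variable"

    # 2. User credentials stored by the Google Cloud CLI (assumed Linux/macOS path).
    adc_file = home / ".config" / "gcloud" / "application_default_credentials.json"
    if adc_file.is_file():
        return "user credentials set up with the Google Cloud CLI"

    # 3. Otherwise only the metadata server can provide credentials.
    return "attached service account (metadata server), if one is available"


print(guess_credentials_source())
```

The helper only inspects the environment and the file system; it does not contact Google Cloud or validate the credentials themselves.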

|

||||

|

||||

Those credentials must be associated with a user or service account with proper permissions to run all checks. To make sure, add the following roles to the member associated with the credentials:

|

||||

|

||||

- Viewer

|

||||

- Security Reviewer

|

||||

- Stackdriver Account Viewer

|

||||

|


@@ -1,36 +0,0 @@

|

||||

# Integrations

|

||||

|

||||

## Slack

|

||||

|

||||

Once a Slack App is configured in your channel, Prowler can send a summary of the execution to [Slack](https://slack.com/) with the following command:

|

||||

|

||||

```sh

|

||||

prowler <provider> --slack

|

||||

```

|

||||

|

||||

|

||||

|

||||

> The Slack integration needs the `SLACK_API_TOKEN` and `SLACK_CHANNEL_ID` environment variables.

|

||||

### Configuration

|

||||

|

||||

To configure the Slack Integration, follow these steps:

|

||||

|

||||

1. Create a Slack Application:

|

||||

- Go to [Slack API page](https://api.slack.com/tutorials/tracks/getting-a-token), scroll down to the *Create app* button and select your workspace:

|

||||

|

||||

|

||||

- Install the application in your selected workspaces:

|

||||

|

||||

|

||||

- Get the *Slack App OAuth Token* that Prowler needs to send the message:

|

||||

|

||||

|

||||

2. Optionally, create a Slack Channel (you can use an existing one)

|

||||

|

||||

3. Integrate the created Slack App to your Slack channel:

|

||||

- Click on the channel, go to the Integrations tab, and Add an App.

|

||||

|

||||

|

||||

4. Set the following environment variables that Prowler will read:

|

||||

- `SLACK_API_TOKEN`: the *Slack App OAuth Token* obtained in step 1.

|

||||

- `SLACK_CHANNEL_ID`: the name of your Slack Channel where Prowler will send the message.
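A wrapper script can verify that both variables are set before launching Prowler with `--slack`. The snippet below is a minimal sketch; `missing_slack_settings` is a hypothetical helper and not part of Prowler.

```python
import os

# The two variables named in step 4 above.
REQUIRED_SLACK_VARS = ("SLACK_API_TOKEN", "SLACK_CHANNEL_ID")


def missing_slack_settings(environ=None) -> list:
    """Return the required Slack variables that are unset or empty."""
    environ = os.environ if environ is None else environ
    return [name for name in REQUIRED_SLACK_VARS if not environ.get(name)]


missing = missing_slack_settings()
if missing:
    print("Set these variables before using --slack: " + ", ".join(missing))
else:
    print("Slack settings found.")
```

Treating an empty value the same as a missing one catches the common case of a variable that was exported without a value.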

|

||||

@@ -51,30 +51,15 @@ prowler <provider> -e/--excluded-checks ec2 rds

|

||||

```console

|

||||

prowler <provider> -C/--checks-file <checks_list>.json

|

||||

```

|

||||

## Custom Checks

|

||||

Prowler allows you to include your custom checks with the flag:

|

||||

|

||||

## Severities

|

||||

Each Prowler check has a severity, and there are options related to it:

|

||||

|

||||

- List the available checks in the provider:

|

||||

```console

|

||||

prowler <provider> -x/--checks-folder <custom_checks_folder>

|

||||

prowler <provider> --list-severities

|

||||

```

|

||||

> S3 URIs are also supported as folders for custom checks, e.g. s3://bucket/prefix/checks_folder/. Make sure that the used credentials have s3:GetObject permissions in the S3 path where the custom checks are located.

|

||||

|

||||

The custom checks folder must contain one subfolder per check. Each subfolder must be named after the check and must contain:

|

||||

|

||||

- An empty `__init__.py`: to make Python treat this check folder as a package.

|

||||

- A `check_name.py` containing the check's logic.

|

||||

- A `check_name.metadata.json` containing the check's metadata.

|

||||

> The check name must start with the service name followed by an underscore (e.g., ec2_instance_public_ip).

|

||||

|

||||

For more information about how to write checks, see the [Developer Guide](../developer-guide/#create-a-new-check-for-a-provider).
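The layout rules for a custom checks folder can be verified with a few lines before passing the folder to Prowler. This is an illustrative sketch only: `validate_checks_folder` is a hypothetical helper, and it checks file presence, not the content of the check or its metadata.

```python
import tempfile
from pathlib import Path


def validate_checks_folder(folder: Path) -> dict:
    """Map each check subfolder name to the list of expected files that are missing."""
    report = {}
    for check_dir in sorted(p for p in folder.iterdir() if p.is_dir()):
        name = check_dir.name
        expected = ["__init__.py", f"{name}.py", f"{name}.metadata.json"]
        report[name] = [f for f in expected if not (check_dir / f).is_file()]
    return report


# Usage: build a throwaway folder with one incomplete check and inspect the report.
with tempfile.TemporaryDirectory() as tmp:
    check_dir = Path(tmp) / "ec2_instance_public_ip"
    check_dir.mkdir()
    (check_dir / "__init__.py").touch()
    (check_dir / "ec2_instance_public_ip.py").touch()
    print(validate_checks_folder(Path(tmp)))
```

A check whose list is empty has all three files in place; any other entry names exactly what still needs to be created.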

|

||||

## Severities

|

||||

Each of Prowler's checks has a severity, which can be:

|

||||

- informational

|

||||

- low

|

||||

- medium

|

||||

- high

|

||||

- critical

|

||||

|

||||

To execute specific severity(s):

|

||||

- Execute specific severity(s):

|

||||

```console

|

||||

prowler <provider> --severity critical high

|

||||

```

|

||||

|

||||

@@ -33,8 +33,9 @@ Several checks analyse resources that are exposed to the Internet, these are:

|

||||

- ec2_instance_internet_facing_with_instance_profile

|

||||

- ec2_instance_public_ip

|

||||

- ec2_networkacl_allow_ingress_any_port

|

||||

- ec2_securitygroup_allow_wide_open_public_ipv4

|

||||

- ec2_securitygroup_allow_ingress_from_internet_to_any_port

|

||||

- ec2_securitygroup_allow_wide_open_public_ipv4

|

||||

- ec2_securitygroup_in_use_without_ingress_filtering

|

||||

- ecr_repositories_not_publicly_accessible

|

||||

- eks_control_plane_endpoint_access_restricted

|

||||

- eks_endpoints_not_publicly_accessible

|

||||

|

||||

@@ -14,6 +14,4 @@ prowler <provider> -i

|

||||

|

||||

- Also, it creates by default a CSV and JSON to see detailed information about the resources extracted.

|

||||

|

||||

|

||||

|

||||

> The inventorying process is done with `resourcegroupstaggingapi` calls (except for the IAM resources which are done with Boto3 API calls.)

|

||||

|

||||

|

||||

@@ -46,11 +46,9 @@ Prowler supports natively the following output formats:

|

||||

|

||||

Hereunder is the structure for each of the supported report formats by Prowler:

|

||||

|

||||

### HTML

|

||||

|

||||

### CSV

|

||||

| ASSESSMENT_START_TIME | FINDING_UNIQUE_ID | PROVIDER | PROFILE | ACCOUNT_ID | ACCOUNT_NAME | ACCOUNT_EMAIL | ACCOUNT_ARN | ACCOUNT_ORG | ACCOUNT_TAGS | REGION | CHECK_ID | CHECK_TITLE | CHECK_TYPE | STATUS | STATUS_EXTENDED | SERVICE_NAME | SUBSERVICE_NAME | SEVERITY | RESOURCE_ID | RESOURCE_ARN | RESOURCE_TYPE | RESOURCE_DETAILS | RESOURCE_TAGS | DESCRIPTION | COMPLIANCE | RISK | RELATED_URL | REMEDIATION_RECOMMENDATION_TEXT | REMEDIATION_RECOMMENDATION_URL | REMEDIATION_RECOMMENDATION_CODE_NATIVEIAC | REMEDIATION_RECOMMENDATION_CODE_TERRAFORM | REMEDIATION_RECOMMENDATION_CODE_CLI | REMEDIATION_RECOMMENDATION_CODE_OTHER | CATEGORIES | DEPENDS_ON | RELATED_TO | NOTES |

|

||||

| ------- | ----------- | ------ | -------- | ------------ | ----------- | ---------- | ---------- | --------------------- | -------------------------- | -------------- | ----------------- | ------------------------ | --------------- | ---------- | ----------------- | --------- | -------------- | ----------------- | ------------------ | --------------------- | -------------------- | ------------------- | ------------------- | -------------------- | -------------------- | -------------------- | -------------------- | -------------------- | -------------------- | -------------------- | -------------------- | -------------------- | -------------------- | -------------------- | -------------------- | -------------------- | -------------------- |

|

||||

| ASSESSMENT_START_TIME | FINDING_UNIQUE_ID | PROVIDER | PROFILE | ACCOUNT_ID | ACCOUNT_NAME | ACCOUNT_EMAIL | ACCOUNT_ARN | ACCOUNT_ORG | ACCOUNT_TAGS | REGION | CHECK_ID | CHECK_TITLE | CHECK_TYPE | STATUS | STATUS_EXTENDED | SERVICE_NAME | SUBSERVICE_NAME | SEVERITY | RESOURCE_ID | RESOURCE_ARN | RESOURCE_TYPE | RESOURCE_DETAILS | RESOURCE_TAGS | DESCRIPTION | RISK | RELATED_URL | REMEDIATION_RECOMMENDATION_TEXT | REMEDIATION_RECOMMENDATION_URL | REMEDIATION_RECOMMENDATION_CODE_NATIVEIAC | REMEDIATION_RECOMMENDATION_CODE_TERRAFORM | REMEDIATION_RECOMMENDATION_CODE_CLI | REMEDIATION_RECOMMENDATION_CODE_OTHER | CATEGORIES | DEPENDS_ON | RELATED_TO | NOTES |

|

||||

| ------- | ----------- | ------ | -------- | ------------ | ----------- | ---------- | ---------- | --------------------- | -------------------------- | -------------- | ----------------- | ------------------------ | --------------- | ---------- | ----------------- | --------- | -------------- | ----------------- | ------------------ | --------------------- | -------------------- | ------------------- | ------------------- | -------------------- | -------------------- | -------------------- | -------------------- | -------------------- | -------------------- | -------------------- | -------------------- | -------------------- | -------------------- | -------------------- | -------------------- | -------------------- |

|

||||

|

||||

### JSON

|

||||

|

||||

@@ -73,10 +71,6 @@ Hereunder is the structure for each of the supported report formats by Prowler:

|

||||

"Severity": "low",

|

||||

"ResourceId": "rds-instance-id",

|

||||

"ResourceArn": "",

|

||||

"ResourceTags": {

|

||||

"test": "test",

|

||||

"environment": "dev"

|

||||

},

|

||||

"ResourceType": "AwsRdsDbInstance",

|

||||

"ResourceDetails": "",

|

||||

"Description": "Ensure RDS instances have minor version upgrade enabled.",

|

||||

@@ -95,15 +89,7 @@ Hereunder is the structure for each of the supported report formats by Prowler:

|

||||

}

|

||||

},

|

||||

"Categories": [],

|

||||

"Notes": "",

|

||||

"Compliance": {

|

||||

"CIS-1.4": [

|

||||

"1.20"

|

||||

],

|

||||

"CIS-1.5": [

|

||||

"1.20"

|

||||

]

|

||||

}

|

||||

"Notes": ""

|

||||

},{

|

||||

"AssessmentStartTime": "2022-12-01T14:16:57.354413",

|

||||

"FindingUniqueId": "",

|

||||

@@ -123,7 +109,7 @@ Hereunder is the structure for each of the supported report formats by Prowler:

|

||||

"ResourceId": "rds-instance-id",

|

||||

"ResourceArn": "",

|

||||

"ResourceType": "AwsRdsDbInstance",

|

||||

"ResourceTags": {},

|

||||

"ResourceDetails": "",

|

||||

"Description": "Ensure RDS instances have minor version upgrade enabled.",

|

||||

"Risk": "Auto Minor Version Upgrade is a feature that you can enable to have your database automatically upgraded when a new minor database engine version is available. Minor version upgrades often patch security vulnerabilities and fix bugs and therefore should be applied.",

|

||||

"RelatedUrl": "https://aws.amazon.com/blogs/database/best-practices-for-upgrading-amazon-rds-to-major-and-minor-versions-of-postgresql/",

|

||||

@@ -140,8 +126,7 @@ Hereunder is the structure for each of the supported report formats by Prowler:

|

||||

}

|

||||

},

|

||||

"Categories": [],

|

||||

"Notes": "",

|

||||

"Compliance": {}

|

||||

"Notes": ""

|

||||

}]

|

||||

```

|

||||

|

||||

@@ -181,30 +166,7 @@ Hereunder is the structure for each of the supported report formats by Prowler:

|

||||

],

|

||||

"Compliance": {

|

||||

"Status": "PASSED",

|

||||

"RelatedRequirements": [

|

||||

"CISA your-systems-2 booting-up-thing-to-do-first-3",

|

||||

"CIS-1.5 2.3.2",

|

||||

"AWS-Foundational-Security-Best-Practices rds",

|

||||

"RBI-Cyber-Security-Framework annex_i_6",

|

||||

"FFIEC d3-cc-pm-b-1 d3-cc-pm-b-3"

|

||||

],

|

||||

"AssociatedStandards": [

|

||||

{

|

||||

"StandardsId": "CISA"

|

||||

},

|

||||

{

|

||||

"StandardsId": "CIS-1.5"

|

||||

},

|

||||

{

|

||||

"StandardsId": "AWS-Foundational-Security-Best-Practices"

|

||||

},

|

||||

{

|

||||

"StandardsId": "RBI-Cyber-Security-Framework"

|

||||

},

|

||||

{

|

||||

"StandardsId": "FFIEC"

|

||||

}

|

||||

]

|

||||

"RelatedRequirements": []

|

||||

},

|

||||

"Remediation": {

|

||||

"Recommendation": {

|

||||

@@ -243,30 +205,7 @@ Hereunder is the structure for each of the supported report formats by Prowler:

|

||||

],

|

||||

"Compliance": {

|

||||

"Status": "PASSED",

|

||||

"RelatedRequirements": [

|

||||

"CISA your-systems-2 booting-up-thing-to-do-first-3",

|

||||

"CIS-1.5 2.3.2",

|

||||

"AWS-Foundational-Security-Best-Practices rds",

|

||||

"RBI-Cyber-Security-Framework annex_i_6",

|

||||

"FFIEC d3-cc-pm-b-1 d3-cc-pm-b-3"

|

||||

],

|

||||

"AssociatedStandards": [

|

||||

{

|

||||

"StandardsId": "CISA"

|

||||

},

|

||||

{

|

||||

"StandardsId": "CIS-1.5"

|

||||

},

|

||||

{

|

||||

"StandardsId": "AWS-Foundational-Security-Best-Practices"

|

||||

},

|

||||

{

|

||||

"StandardsId": "RBI-Cyber-Security-Framework"

|

||||

},

|

||||

{

|

||||

"StandardsId": "FFIEC"

|

||||

}

|

||||

]

|

||||

"RelatedRequirements": []

|

||||

},

|

||||

"Remediation": {

|

||||

"Recommendation": {

|

||||

|

||||

@@ -33,12 +33,10 @@ nav:

|

||||

- Reporting: tutorials/reporting.md

|

||||

- Compliance: tutorials/compliance.md

|

||||

- Quick Inventory: tutorials/quick-inventory.md

|

||||

- Integrations: tutorials/integrations.md

|

||||

- Configuration File: tutorials/configuration_file.md

|

||||

- Logging: tutorials/logging.md

|

||||

- Allowlist: tutorials/allowlist.md

|

||||

- Pentesting: tutorials/pentesting.md

|

||||

- Developer Guide: tutorials/developer-guide.md

|

||||

- AWS:

|

||||

- Assume Role: tutorials/aws/role-assumption.md

|

||||

- AWS Security Hub: tutorials/aws/securityhub.md

|

||||

@@ -52,9 +50,6 @@ nav:

|

||||

- Azure:

|

||||

- Authentication: tutorials/azure/authentication.md

|

||||

- Subscriptions: tutorials/azure/subscriptions.md

|

||||

- Google Cloud:

|

||||

- Authentication: tutorials/gcp/authentication.md

|

||||

- Developer Guide: tutorials/developer-guide.md

|

||||

- Security: security.md

|

||||

- Contact Us: contact.md

|

||||

- Troubleshooting: troubleshooting.md

|

||||

|

||||

@@ -6,31 +6,23 @@

|

||||

"account:Get*",

|

||||

"appstream:Describe*",

|

||||

"appstream:List*",

|

||||

"backup:List*",

|

||||

"cloudtrail:GetInsightSelectors",

|

||||

"codeartifact:List*",

|

||||

"codebuild:BatchGet*",

|

||||

"drs:Describe*",

|

||||

"ds:Get*",

|

||||

"ds:Describe*",

|

||||

"ds:Get*",

|

||||

"ds:List*",

|

||||

"ec2:GetEbsEncryptionByDefault",

|

||||

"ecr:Describe*",

|

||||

"ecr:GetRegistryScanningConfiguration",

|

||||

"elasticfilesystem:DescribeBackupPolicy",

|

||||

"glue:GetConnections",

|

||||

"glue:GetSecurityConfiguration*",

|

||||

"glue:SearchTables",

|

||||

"lambda:GetFunction*",

|

||||

"logs:FilterLogEvents",

|

||||

"macie2:GetMacieSession",

|

||||

"s3:GetAccountPublicAccessBlock",

|

||||

"shield:DescribeProtection",

|

||||

"shield:GetSubscriptionState",

|

||||

"securityhub:BatchImportFindings",

|

||||

"securityhub:GetFindings",

|

||||

"ssm:GetDocument",

|

||||

"ssm-incidents:List*",

|

||||

"support:Describe*",

|

||||

"tag:GetTagKeys"

|

||||

],

|

||||

@@ -44,8 +36,7 @@

|

||||

"apigateway:GET"

|

||||

],

|

||||

"Resource": [

|

||||

"arn:aws:apigateway:*::/restapis/*",

|

||||

"arn:aws:apigateway:*::/apis/*"

|

||||

"arn:aws:apigateway:*::/restapis/*"

|

||||

]

|

||||

}

|

||||

]

|

||||

|

||||

3296

poetry.lock

generated

@@ -1,7 +1,6 @@

|

||||