mirror of

https://github.com/prowler-cloud/prowler.git

synced 2026-05-16 09:12:47 +00:00

Compare commits

217 Commits

| Author | SHA1 | Date | |

|---|---|---|---|

| 6dee12450e | |||

| eecb1dd8c3 | |||

| 74add0c151 | |||

| 1cf86350bc | |||

| e9b09790da | |||

| c74b4adf27 | |||

| a769bb86d3 | |||

| f8a2527429 | |||

| ae645718ad | |||

| a0625dff2f | |||

| 37e9cbbabd | |||

| 8818f47333 | |||

| 3cffe72273 | |||

| 135aaca851 | |||

| cf8df051de | |||

| bef42f3f2d | |||

| 3d86bf1705 | |||

| 5a43ec951a | |||

| b0e6ab6e31 | |||

| b7fb38cc9e | |||

| f29f7fc239 | |||

| 2997ff0f1c | |||

| 11dc0aa5b2 | |||

| 8bddb9b265 | |||

| 689e292585 | |||

| bff2aabda6 | |||

| 4b29293362 | |||

| 4e24103dc6 | |||

| 3b90347849 | |||

| 6a7a037cec | |||

| 927c13b9c6 | |||

| 11cc8e998b | |||

| 4a71739c56 | |||

| aedc5cd0ad | |||

| 3d81307e56 | |||

| 918661bd7a | |||

| 99f9abe3f6 | |||

| f2950764f0 | |||

| d9777a68c7 | |||

| 2a4cc9a5f8 | |||

| 1f0c210926 | |||

| dd64c7d226 | |||

| 865f79f5b3 | |||

| 1f8a4c1022 | |||

| 1e422f20aa | |||

| 29eda28bf3 | |||

| f67f0cc66d | |||

| 721cafa0cd | |||

| c1d60054e9 | |||

| b95b3f68d3 | |||

| 81b6e27eb8 | |||

| d69678424b | |||

| a43c1aceec | |||

| f70cf8d81e | |||

| 83b6c79203 | |||

| 1192c038b2 | |||

| 4ebbf6553e | |||

| c501d63382 | |||

| 72d6d3f535 | |||

| ddd34dc9cc | |||

| 03b1c10d13 | |||

| 4cd5b8fd04 | |||

| f0ce17182b | |||

| 2a8a7d844b | |||

| ff33f426e5 | |||

| f691046c1f | |||

| 9fad8735b8 | |||

| c632055517 | |||

| fd850790d5 | |||

| 912d5d7f8c | |||

| d88a136ac3 | |||

| 172484cf08 | |||

| 821083639a | |||

| e4f0f3ec87 | |||

| cc6302f7b8 | |||

| c89fd82856 | |||

| 0e29a92d42 | |||

| 835d8ffe5d | |||

| 21ee2068a6 | |||

| 0ad149942b | |||

| 66305768c0 | |||

| 05f98fe993 | |||

| 89416f37af | |||

| 7285ddcb4e | |||

| 8993a4f707 | |||

| 633d7bd8a8 | |||

| 3944ea2055 | |||

| d85d0f5877 | |||

| d32a7986a5 | |||

| 71813425bd | |||

| da000b54ca | |||

| 74a9b42d9f | |||

| f9322ab3aa | |||

| 5becaca2c4 | |||

| 50a670fbc4 | |||

| 48f405a696 | |||

| bc56c4242e | |||

| 1b63256b9c | |||

| 7930b449b3 | |||

| e5cd42da55 | |||

| 2a54bbf901 | |||

| 2e134ed947 | |||

| ba727391db | |||

| d4346149fa | |||

| 2637fc5132 | |||

| ac5135470b | |||

| 613966aecf | |||

| 83ddcb9c39 | |||

| 957c2433cf | |||

| c10b367070 | |||

| 432416d09e | |||

| dd7d25dc10 | |||

| 24c60a0ef6 | |||

| f616c17bd2 | |||

| 5628200bd4 | |||

| ae93527a6f | |||

| 2939d5cadd | |||

| e2c7bc2d6d | |||

| f4bae78730 | |||

| d307898289 | |||

| 879ac3ccb1 | |||

| cd41e73cbe | |||

| 47f1ca646e | |||

| a18b18e530 | |||

| 4d1ffbb652 | |||

| 13423b137e | |||

| d60eea5e2f | |||

| 39c7d3b69f | |||

| 2de04f1374 | |||

| 5fb39ea316 | |||

| 55640ecad2 | |||

| 69d3867895 | |||

| 210f44f66f | |||

| b78e4ad6a1 | |||

| 4146566f92 | |||

| 4e46dfb068 | |||

| 13c96a80db | |||

| de77a33341 | |||

| 295bb74acf | |||

| 59abd2bd5b | |||

| ecbfbfb960 | |||

| 04e5804665 | |||

| 681d0d9538 | |||

| 8bfd9c0e62 | |||

| 95df9bc316 | |||

| d08576f672 | |||

| aa16bf4084 | |||

| 432632d981 | |||

| d6ade7694e | |||

| c9e282f236 | |||

| 5b902a1329 | |||

| fc7c932169 | |||

| 819b52687c | |||

| 28fff104a1 | |||

| 07b2b0de5a | |||

| 4287b7ac61 | |||

| 734331d5bc | |||

| 5de2bf7a83 | |||

| 1744921a0a | |||

| d4da64582c | |||

| d94acfeb17 | |||

| fcc14012da | |||

| cc8cbc89fd | |||

| 8582e40edf | |||

| 1e87ef12ee | |||

| 565200529f | |||

| 198c7f48ca | |||

| 8105e63b79 | |||

| 3932296fcf | |||

| cb0d9d3392 | |||

| 4b90eca21e | |||

| 365b396f9a | |||

| c526c61d5e | |||

| c4aff56f23 | |||

| d9e0ed1cc9 | |||

| e77cd6b2b2 | |||

| f04b174e67 | |||

| 0c1c641765 | |||

| d44f6bf20f | |||

| 1fa62cf417 | |||

| d8d2ddd9e7 | |||

| f3ff8369c3 | |||

| 99d1868827 | |||

| 31cefa5b3c | |||

| 2d5ac8238b | |||

| 248cc9d68b | |||

| 5f0a5b57f9 | |||

| 86367fca3f | |||

| 07be3c21bf | |||

| 3097ba6c66 | |||

| b4669a2a72 | |||

| e8848ca261 | |||

| 5c6902b459 | |||

| 9b772a70a1 | |||

| 6c12a3e1e0 | |||

| c6f0351e9c | |||

| 7e90389dab | |||

| 30ce25300f | |||

| 26caf51619 | |||

| 3ecb5dbce6 | |||

| 1d409d04f2 | |||

| 679414418e | |||

| b26370d508 | |||

| 72b30aa45f | |||

| d9561d5d22 | |||

| 3d0ab4684f | |||

| 29a071c98e | |||

| 0ac7064d80 | |||

| dcd55dbb8f | |||

| 441dc11963 | |||

| 21a8193510 | |||

| 3b9a3ff6be | |||

| c5f12f0a6c | |||

| 90565099bd | |||

| 2b2814723f | |||

| 42e54c42cf | |||

| f0c12bbf93 |

@@ -1,4 +1,16 @@
# Ignore git files
.git/
.github/

# Ignore Dockerfile
Dockerfile

# Ignore hidden files
.pre-commit-config.yaml
.dockerignore
.gitignore
.pytest*
.DS_Store

# Ignore output directories
output/

@@ -0,0 +1 @@
* @prowler-cloud/prowler-team

@@ -0,0 +1,50 @@
---
name: Bug report
about: Create a report to help us improve
title: "[Bug]: "
labels: bug, status/needs-triage
assignees: ''

---

<!--
Please use this template to create your bug report. By providing as much info as possible you help us understand the issue, reproduce it and resolve it for you quicker. Therefore, take a couple of extra minutes to make sure you have provided all info needed.

PROTIP: record your screen and attach it as a gif to showcase the issue.

- How to record and attach gif: https://bit.ly/2Mi8T6K
-->

**What happened?**
A clear and concise description of what the bug is or what is not working as expected


**How to reproduce it**
Steps to reproduce the behavior:
1. What command are you running?
2. Environment you have, like single account, multi-account, organizations, etc.
3. See error


**Expected behavior**
A clear and concise description of what you expected to happen.


**Screenshots or Logs**
If applicable, add screenshots to help explain your problem.
Also, you can add logs (anonymize them first!). Here is a command that may help you share a log:
`bash -x ./prowler -options > debug.log 2>&1` then attach here `debug.log`


**From where are you running Prowler?**
Please, complete the following information:
- Resource: [e.g. EC2 instance, Fargate task, Docker container manually, EKS, Cloud9, CodeBuild, workstation, etc.]
- OS: [e.g. Amazon Linux 2, Mac, Alpine, Windows, etc. ]
- AWS-CLI Version [`aws --version`]:
- Prowler Version [`./prowler -V`]:
- Shell and version:
- Others:


**Additional context**
Add any other context about the problem here.
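The bug report template above asks reporters to anonymize logs before attaching them. As a minimal sketch of what that could look like, the hypothetical `anonymize_log` filter below masks access key IDs that start with `AKIA` and bare 12-digit AWS account IDs. The function name and both patterns are assumptions made for illustration; they are not part of Prowler, and they will not catch every sensitive value (and may mask unrelated 12-digit numbers).

```sh
# Hypothetical helper (not part of Prowler): mask common AWS identifiers
# in a log stream read from stdin.
anonymize_log() {
  # Mask access key IDs first (AKIA + 16 characters), then 12-digit account IDs.
  sed -E \
    -e 's/AKIA[0-9A-Z]{16}/AKIAXXXXXXXXXXXXXXXX/g' \
    -e 's/[0-9]{12}/XXXXXXXXXXXX/g'
}
```

It could be placed in the pipeline suggested by the template, for example `bash -x ./prowler -options 2>&1 | anonymize_log > debug.log`; the result should still be reviewed by hand before sharing.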

@@ -0,0 +1,5 @@
blank_issues_enabled: false
contact_links:
  - name: Questions & Help
    url: https://github.com/prowler-cloud/prowler/discussions/categories/q-a
    about: Please ask and answer questions here.

@@ -0,0 +1,20 @@
---
name: Feature request
about: Suggest an idea for this project
title: ''
labels: enhancement, status/needs-triage
assignees: ''

---

**Is your feature request related to a problem? Please describe.**
A clear and concise description of what the problem is. Ex. I'm always frustrated when [...]

**Describe the solution you'd like**
A clear and concise description of what you want to happen.

**Describe alternatives you've considered**
A clear and concise description of any alternative solutions or features you've considered.

**Additional context**
Add any other context or screenshots about the feature request here.

@@ -1 +1,13 @@
### Context

Please include relevant motivation and context for this PR.


### Description

Please include a summary of the change and which issue is fixed. List any dependencies that are required for this change.


### License

By submitting this pull request, I confirm that my contribution is made under the terms of the Apache 2.0 license.

@@ -0,0 +1,209 @@
name: build-lint-push-containers

on:
  push:
    branches:
      - 'master'
    paths-ignore:
      - '.github/**'
      - 'README.md'

  release:
    types: [published, edited]

env:
  AWS_REGION_STG: eu-west-1
  AWS_REGION_PLATFORM: eu-west-1
  AWS_REGION_PRO: us-east-1
  IMAGE_NAME: prowler
  LATEST_TAG: latest
  STABLE_TAG: stable
  TEMPORARY_TAG: temporary
  DOCKERFILE_PATH: ./Dockerfile

jobs:
  # Lint Dockerfile using Hadolint
  # dockerfile-linter:
  #   runs-on: ubuntu-latest
  #   steps:
  #     -
  #       name: Checkout
  #       uses: actions/checkout@v3
  #     -
  #       name: Install Hadolint
  #       run: |
  #         VERSION=$(curl --silent "https://api.github.com/repos/hadolint/hadolint/releases/latest" | \
  #           grep '"tag_name":' | \
  #           sed -E 's/.*"v([^"]+)".*/\1/' \
  #           ) && curl -L -o /tmp/hadolint https://github.com/hadolint/hadolint/releases/download/v${VERSION}/hadolint-Linux-x86_64 \
  #           && chmod +x /tmp/hadolint
  #     -
  #       name: Run Hadolint
  #       run: |
  #         /tmp/hadolint util/Dockerfile

  # Build Prowler OSS container
  container-build:
    # needs: dockerfile-linter
    runs-on: ubuntu-latest
    steps:
      -
        name: Checkout
        uses: actions/checkout@v3
      -
        name: Set up Docker Buildx
        uses: docker/setup-buildx-action@v2
      -
        name: Build
        uses: docker/build-push-action@v2
        with:
          # Without pushing to registries
          push: false
          tags: ${{ env.IMAGE_NAME }}:${{ env.TEMPORARY_TAG }}
          file: ${{ env.DOCKERFILE_PATH }}
          outputs: type=docker,dest=/tmp/${{ env.IMAGE_NAME }}.tar
      -
        name: Share image between jobs
        uses: actions/upload-artifact@v2
        with:
          name: ${{ env.IMAGE_NAME }}.tar
          path: /tmp/${{ env.IMAGE_NAME }}.tar

  # Lint Prowler OSS container using Dockle
  # container-linter:
  #   needs: container-build
  #   runs-on: ubuntu-latest
  #   steps:
  #     -
  #       name: Get container image from shared
  #       uses: actions/download-artifact@v2
  #       with:
  #         name: ${{ env.IMAGE_NAME }}.tar
  #         path: /tmp
  #     -
  #       name: Load Docker image
  #       run: |
  #         docker load --input /tmp/${{ env.IMAGE_NAME }}.tar
  #         docker image ls -a
  #     -
  #       name: Install Dockle
  #       run: |
  #         VERSION=$(curl --silent "https://api.github.com/repos/goodwithtech/dockle/releases/latest" | \
  #           grep '"tag_name":' | \
  #           sed -E 's/.*"v([^"]+)".*/\1/' \
  #           ) && curl -L -o dockle.deb https://github.com/goodwithtech/dockle/releases/download/v${VERSION}/dockle_${VERSION}_Linux-64bit.deb \
  #           && sudo dpkg -i dockle.deb && rm dockle.deb
  #     -
  #       name: Run Dockle
  #       run: dockle ${{ env.IMAGE_NAME }}:${{ env.TEMPORARY_TAG }}

  # Push Prowler OSS container to registries
  container-push:
    # needs: container-linter
    needs: container-build
    runs-on: ubuntu-latest
    permissions:
      id-token: write
      contents: read # This is required for actions/checkout
    steps:
      -
        name: Get container image from shared
        uses: actions/download-artifact@v2
        with:
          name: ${{ env.IMAGE_NAME }}.tar
          path: /tmp
      -
        name: Load Docker image
        run: |
          docker load --input /tmp/${{ env.IMAGE_NAME }}.tar
          docker image ls -a
      -
        name: Login to DockerHub
        uses: docker/login-action@v2
        with:
          username: ${{ secrets.DOCKERHUB_USERNAME }}
          password: ${{ secrets.DOCKERHUB_TOKEN }}
      -
        name: Login to Public ECR
        uses: docker/login-action@v2
        with:
          registry: public.ecr.aws
          username: ${{ secrets.PUBLIC_ECR_AWS_ACCESS_KEY_ID }}
          password: ${{ secrets.PUBLIC_ECR_AWS_SECRET_ACCESS_KEY }}
        env:
          AWS_REGION: ${{ env.AWS_REGION_PRO }}
      -
        name: Configure AWS Credentials -- STG
        if: github.event_name == 'push'
        uses: aws-actions/configure-aws-credentials@v1
        with:
          aws-region: ${{ env.AWS_REGION_STG }}
          role-to-assume: ${{ secrets.STG_IAM_ROLE_ARN }}
          role-session-name: build-lint-containers-stg
      -
        name: Login to ECR -- STG
        if: github.event_name == 'push'
        uses: docker/login-action@v2
        with:
          registry: ${{ secrets.STG_ECR }}
      -
        name: Configure AWS Credentials -- PLATFORM
        if: github.event_name == 'release'
        uses: aws-actions/configure-aws-credentials@v1
        with:
          aws-region: ${{ env.AWS_REGION_PLATFORM }}
          role-to-assume: ${{ secrets.STG_IAM_ROLE_ARN }}
          role-session-name: build-lint-containers-pro
      -
        name: Login to ECR -- PLATFORM
        if: github.event_name == 'release'
        uses: docker/login-action@v2
        with:
          registry: ${{ secrets.PLATFORM_ECR }}
      -
        # Push to master branch - push "latest" tag
        name: Tag (latest)
        if: github.event_name == 'push'
        run: |
          docker tag ${{ env.IMAGE_NAME }}:${{ env.TEMPORARY_TAG }} ${{ secrets.PLATFORM_ECR }}/${{ secrets.PLATFORM_ECR_REPOSITORY }}:${{ env.LATEST_TAG }}
          docker tag ${{ env.IMAGE_NAME }}:${{ env.TEMPORARY_TAG }} ${{ secrets.DOCKER_HUB_REPOSITORY }}/${{ env.IMAGE_NAME }}:${{ env.LATEST_TAG }}
          docker tag ${{ env.IMAGE_NAME }}:${{ env.TEMPORARY_TAG }} ${{ secrets.PUBLIC_ECR_REPOSITORY }}/${{ env.IMAGE_NAME }}:${{ env.LATEST_TAG }}
      -
        # Push to master branch - push "latest" tag
        name: Push (latest)
        if: github.event_name == 'push'
        run: |
          docker push ${{ secrets.PLATFORM_ECR }}/${{ secrets.PLATFORM_ECR_REPOSITORY }}:${{ env.LATEST_TAG }}
          docker push ${{ secrets.DOCKER_HUB_REPOSITORY }}/${{ env.IMAGE_NAME }}:${{ env.LATEST_TAG }}
          docker push ${{ secrets.PUBLIC_ECR_REPOSITORY }}/${{ env.IMAGE_NAME }}:${{ env.LATEST_TAG }}
      -
        # Tag the new release (stable and release tag)
        name: Tag (release)
        if: github.event_name == 'release'
        run: |
          docker tag ${{ env.IMAGE_NAME }}:${{ env.TEMPORARY_TAG }} ${{ secrets.PLATFORM_ECR }}/${{ secrets.PLATFORM_ECR_REPOSITORY }}:${{ github.event.release.tag_name }}
          docker tag ${{ env.IMAGE_NAME }}:${{ env.TEMPORARY_TAG }} ${{ secrets.DOCKER_HUB_REPOSITORY }}/${{ env.IMAGE_NAME }}:${{ github.event.release.tag_name }}
          docker tag ${{ env.IMAGE_NAME }}:${{ env.TEMPORARY_TAG }} ${{ secrets.PUBLIC_ECR_REPOSITORY }}/${{ env.IMAGE_NAME }}:${{ github.event.release.tag_name }}

          docker tag ${{ env.IMAGE_NAME }}:${{ env.TEMPORARY_TAG }} ${{ secrets.PLATFORM_ECR }}/${{ secrets.PLATFORM_ECR_REPOSITORY }}:${{ env.STABLE_TAG }}
          docker tag ${{ env.IMAGE_NAME }}:${{ env.TEMPORARY_TAG }} ${{ secrets.DOCKER_HUB_REPOSITORY }}/${{ env.IMAGE_NAME }}:${{ env.STABLE_TAG }}
          docker tag ${{ env.IMAGE_NAME }}:${{ env.TEMPORARY_TAG }} ${{ secrets.PUBLIC_ECR_REPOSITORY }}/${{ env.IMAGE_NAME }}:${{ env.STABLE_TAG }}

      -
        # Push the new release (stable and release tag)
        name: Push (release)
        if: github.event_name == 'release'
        run: |
          docker push ${{ secrets.PLATFORM_ECR }}/${{ secrets.PLATFORM_ECR_REPOSITORY }}:${{ github.event.release.tag_name }}
          docker push ${{ secrets.DOCKER_HUB_REPOSITORY }}/${{ env.IMAGE_NAME }}:${{ github.event.release.tag_name }}
          docker push ${{ secrets.PUBLIC_ECR_REPOSITORY }}/${{ env.IMAGE_NAME }}:${{ github.event.release.tag_name }}

          docker push ${{ secrets.PLATFORM_ECR }}/${{ secrets.PLATFORM_ECR_REPOSITORY }}:${{ env.STABLE_TAG }}
          docker push ${{ secrets.DOCKER_HUB_REPOSITORY }}/${{ env.IMAGE_NAME }}:${{ env.STABLE_TAG }}
          docker push ${{ secrets.PUBLIC_ECR_REPOSITORY }}/${{ env.IMAGE_NAME }}:${{ env.STABLE_TAG }}
      -
        name: Delete artifacts
        if: always()
        uses: geekyeggo/delete-artifact@v1
        with:
          name: ${{ env.IMAGE_NAME }}.tar
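The tagging rules in the `build-lint-push-containers` workflow reduce to a small decision: a push to `master` publishes the `latest` tag, while a release publishes the release tag plus `stable`. A minimal sketch of that decision as a shell function follows; `tags_for_event` is a hypothetical name used only for illustration and does not appear in the workflow.

```sh
# Hypothetical helper: print the image tags the workflow would publish.
# $1 = GitHub event name, $2 = release tag name (only used for releases).
tags_for_event() {
  case "$1" in
    push)    printf '%s\n' latest ;;
    release) printf '%s\n' "$2" stable ;;
    *)       return 1 ;;  # any other event publishes nothing
  esac
}
```

Calling it with `release` and a version string prints two lines, one per tag, mirroring the two groups of `docker tag` commands in the release step.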

@@ -0,0 +1,18 @@
name: find-secrets

on: pull_request

jobs:
  trufflehog:
    runs-on: ubuntu-latest
    steps:
      - name: Checkout
        uses: actions/checkout@v3
        with:
          fetch-depth: 0
      - name: TruffleHog OSS
        uses: trufflesecurity/trufflehog@v3.13.0
        with:
          path: ./
          base: ${{ github.event.repository.default_branch }}
          head: HEAD

@@ -0,0 +1,41 @@
name: Lint & Test

on:
  push:
    branches:
      - 'prowler-3.0-dev'
  pull_request:
    branches:
      - 'prowler-3.0-dev'

jobs:
  build:

    runs-on: ubuntu-latest
    strategy:
      matrix:
        python-version: ["3.9"]

    steps:
      - uses: actions/checkout@v3
      - name: Set up Python ${{ matrix.python-version }}
        uses: actions/setup-python@v4
        with:
          python-version: ${{ matrix.python-version }}
      - name: Install dependencies
        run: |
          python -m pip install --upgrade pip
          pip install pipenv
          pipenv install
      - name: Bandit
        run: |
          pipenv run bandit -q -lll -x '*_test.py,./contrib/' -r .
      - name: Safety
        run: |
          pipenv run safety check
      - name: Vulture
        run: |
          pipenv run vulture --exclude "contrib" --min-confidence 100 .
      - name: Test with pytest
        run: |
          pipenv run pytest -n auto

@@ -0,0 +1,50 @@
# This is a basic workflow to help you get started with Actions

name: Refresh regions of AWS services

on:
  schedule:
    - cron: "0 9 * * *" # runs at 09:00 UTC every day

env:
  GITHUB_BRANCH: "prowler-3.0-dev"

# A workflow run is made up of one or more jobs that can run sequentially or in parallel
jobs:
  # This workflow contains a single job called "build"
  build:
    # The type of runner that the job will run on
    runs-on: ubuntu-latest
    # Steps represent a sequence of tasks that will be executed as part of the job
    steps:
      # Checks-out your repository under $GITHUB_WORKSPACE, so your job can access it
      - uses: actions/checkout@v3
        with:
          ref: ${{ env.GITHUB_BRANCH }}

      - name: setup python
        uses: actions/setup-python@v2
        with:
          python-version: 3.9 # install the python needed

      # Runs a single command using the runner's shell
      - name: Run a one-line script
        run: python3 util/update_aws_services_regions.py

      # Create pull request
      - name: Create Pull Request
        uses: peter-evans/create-pull-request@v4
        with:
          token: ${{ secrets.GITHUB_TOKEN }}
          commit-message: "feat(regions_update): Update regions for AWS services."
          branch: "aws-services-regions-updated"
          labels: "status/waiting-for-revision, severity/low"
          title: "feat(regions_update): Changes in regions for AWS services."
          body: |
            ### Description

            This PR updates the regions for AWS services.

            ### License

            By submitting this pull request, I confirm that my contribution is made under the terms of the Apache 2.0 license.

@@ -0,0 +1,29 @@
repos:
  - repo: https://github.com/pre-commit/pre-commit-hooks
    rev: v3.3.0
    hooks:
      - id: check-merge-conflict
      - id: check-yaml
        args: ['--unsafe']
      - id: check-json
      - id: end-of-file-fixer
      - id: trailing-whitespace
        exclude: 'README.md'
      - id: no-commit-to-branch
      - id: pretty-format-json
        args: ['--autofix']

  - repo: https://github.com/koalaman/shellcheck-precommit
    rev: v0.8.0
    hooks:
      - id: shellcheck

  - repo: https://github.com/hadolint/hadolint
    rev: v2.10.0
    hooks:
      - id: hadolint
        name: Lint Dockerfiles
        description: Runs hadolint to lint Dockerfiles
        language: system
        types: ["dockerfile"]
        entry: hadolint


@@ -0,0 +1,64 @@
# Build command
# docker build --platform=linux/amd64 --no-cache -t prowler:latest -f ./Dockerfile .

# hadolint ignore=DL3007
FROM public.ecr.aws/amazonlinux/amazonlinux:latest

LABEL maintainer="https://github.com/prowler-cloud/prowler"

ARG USERNAME=prowler
ARG USERID=34000

# Prepare image as root
USER 0
# System dependencies
# hadolint ignore=DL3006,DL3013,DL3033
RUN yum upgrade -y && \
    yum install -y python3 bash curl jq coreutils py3-pip which unzip shadow-utils && \
    yum clean all && \
    rm -rf /var/cache/yum

RUN amazon-linux-extras install -y epel postgresql14 && \
    yum clean all && \
    rm -rf /var/cache/yum

# Create non-root user
RUN useradd -l -s /bin/bash -U -u ${USERID} ${USERNAME}

USER ${USERNAME}

# Python dependencies
# hadolint ignore=DL3006,DL3013,DL3042
RUN pip3 install --upgrade pip && \
    pip3 install --no-cache-dir boto3 detect-secrets==1.0.3 && \
    pip3 cache purge
# Set Python PATH
ENV PATH="/home/${USERNAME}/.local/bin:${PATH}"

USER 0

# Install AWS CLI
RUN curl https://awscli.amazonaws.com/awscli-exe-linux-x86_64.zip -o awscliv2.zip && \
    unzip -q awscliv2.zip && \
    aws/install && \
    rm -rf aws awscliv2.zip

# Keep Python2 for yum
RUN sed -i '1 s/python/python2.7/' /usr/bin/yum

# Set Python3
RUN rm /usr/bin/python && \
    ln -s /usr/bin/python3 /usr/bin/python

# Set working directory
WORKDIR /prowler

# Copy all files
COPY . ./

# Set files ownership
RUN chown -R prowler .

USER ${USERNAME}

ENTRYPOINT ["./prowler"]

@@ -1,17 +1,38 @@
<p align="center">
  <img align="center" src="docs/images/prowler-pro-dark.png#gh-dark-mode-only" width="150" height="36">
  <img align="center" src="docs/images/prowler-pro-light.png#gh-light-mode-only" width="15%" height="15%">
</p>
<p align="center">
  <b><i>    See all the things you and your team can do with ProwlerPro at <a href="https://prowler.pro">prowler.pro</a></i></b>
</p>
<hr>
<p align="center">
  <img src="https://user-images.githubusercontent.com/3985464/113734260-7ba06900-96fb-11eb-82bc-d4f68a1e2710.png" />
</p>
<p align="center">
  <a href="https://join.slack.com/t/prowler-workspace/shared_invite/zt-1hix76xsl-2uq222JIXrC7Q8It~9ZNog"><img alt="Slack Shield" src="https://img.shields.io/badge/slack-prowler-brightgreen.svg?logo=slack"></a>
  <a href="https://hub.docker.com/r/toniblyx/prowler"><img alt="Docker Pulls" src="https://img.shields.io/docker/pulls/toniblyx/prowler"></a>
  <a href="https://hub.docker.com/r/toniblyx/prowler"><img alt="Docker" src="https://img.shields.io/docker/cloud/build/toniblyx/prowler"></a>
  <a href="https://hub.docker.com/r/toniblyx/prowler"><img alt="Docker" src="https://img.shields.io/docker/image-size/toniblyx/prowler"></a>
  <a href="https://gallery.ecr.aws/o4g1s5r6/prowler"><img width="120" height="19" alt="AWS ECR Gallery" src="https://user-images.githubusercontent.com/3985464/151531396-b6535a68-c907-44eb-95a1-a09508178616.png"></a>
  <a href="https://github.com/prowler-cloud/prowler"><img alt="Repo size" src="https://img.shields.io/github/repo-size/prowler-cloud/prowler"></a>
  <a href="https://github.com/prowler-cloud/prowler"><img alt="Lines" src="https://img.shields.io/tokei/lines/github/prowler-cloud/prowler"></a>
  <a href="https://github.com/prowler-cloud/prowler/issues"><img alt="Issues" src="https://img.shields.io/github/issues/prowler-cloud/prowler"></a>
  <a href="https://github.com/prowler-cloud/prowler/releases"><img alt="Version" src="https://img.shields.io/github/v/release/prowler-cloud/prowler?include_prereleases"></a>
  <a href="https://github.com/prowler-cloud/prowler/releases"><img alt="Version" src="https://img.shields.io/github/release-date/prowler-cloud/prowler"></a>
  <a href="https://github.com/prowler-cloud/prowler"><img alt="Contributors" src="https://img.shields.io/github/contributors-anon/prowler-cloud/prowler"></a>
  <a href="https://github.com/prowler-cloud/prowler"><img alt="License" src="https://img.shields.io/github/license/prowler-cloud/prowler"></a>
  <a href="https://twitter.com/ToniBlyx"><img alt="Twitter" src="https://img.shields.io/twitter/follow/toniblyx?style=social"></a>
</p>

# Prowler - AWS Security Tool
<p align="center">
<i>Prowler</i> is an Open Source security tool to perform AWS security best practices assessments, audits, incident response, continuous monitoring, hardening and forensics readiness. It contains more than 240 controls covering CIS, PCI-DSS, ISO27001, GDPR, HIPAA, FFIEC, SOC2, AWS FTR, ENS and custom security frameworks.
</p>

[](https://discord.gg/UjSMCVnxSB)
[](https://hub.docker.com/r/toniblyx/prowler)
[](https://gallery.ecr.aws/o4g1s5r6/prowler)


## Table of Contents

- [Description](#description)
- [Prowler Container Versions](#prowler-container-versions)
- [Features](#features)
- [High level architecture](#high-level-architecture)
- [Requirements and Installation](#requirements-and-installation)

@@ -20,7 +41,8 @@
- [Advanced Usage](#advanced-usage)
- [Security Hub integration](#security-hub-integration)
- [CodeBuild deployment](#codebuild-deployment)
- [Whitelist/allowlist or remove FAIL from resources](#whitelist-or-allowlist-or-remove-a-fail-from-resources)
- [Allowlist](#allowlist-or-remove-a-fail-from-resources)
- [Inventory](#inventory)
- [Fix](#how-to-fix-every-fail)
- [Troubleshooting](#troubleshooting)
- [Extras](#extras)

@@ -29,7 +51,7 @@
- [HIPAA Checks](#hipaa-checks)
- [Trust Boundaries Checks](#trust-boundaries-checks)
- [Multi Account and Continuous Monitoring](util/org-multi-account/README.md)
- [Add Custom Checks](#add-custom-checks)
- [Custom Checks](#custom-checks)
- [Third Party Integrations](#third-party-integrations)
- [Full list of checks and groups](/LIST_OF_CHECKS_AND_GROUPS.md)
- [License](#license)

@@ -38,21 +60,32 @@

Prowler is a command line tool that helps you with AWS security assessment, auditing, hardening and incident response.

It follows guidelines of the CIS Amazon Web Services Foundations Benchmark (49 checks) and has more than 100 additional checks including related to GDPR, HIPAA, PCI-DSS, ISO-27001, FFIEC, SOC2 and others.
It follows guidelines of the CIS Amazon Web Services Foundations Benchmark (49 checks) and has more than 190 additional checks including related to GDPR, HIPAA, PCI-DSS, ISO-27001, FFIEC, SOC2 and others.

Read more about [CIS Amazon Web Services Foundations Benchmark v1.2.0 - 05-23-2018](https://d0.awsstatic.com/whitepapers/compliance/AWS_CIS_Foundations_Benchmark.pdf)

## Prowler container versions

The available versions of Prowler are the following:
- latest: in sync with master branch (bear in mind that it is not a stable version)
- <x.y.z> (release): you can find the releases [here](https://github.com/prowler-cloud/prowler/releases), those are stable releases.
- stable: this tag always points to the latest release.

The container images are available here:
- [DockerHub](https://hub.docker.com/r/toniblyx/prowler/tags)
- [AWS Public ECR](https://gallery.ecr.aws/o4g1s5r6/prowler)
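
To make the tag scheme concrete, here is a minimal sketch that composes an image reference from a repository and one of the tags listed above. The function name `prowler_image_ref` is hypothetical; the default repository `toniblyx/prowler` is taken from the DockerHub link, and defaulting to `stable` is an assumption made for this example.

```sh
# Hypothetical helper: build "<repository>:<tag>" for a Prowler image.
# $1 = repository (assumed default: toniblyx/prowler)
# $2 = tag: latest, stable, or a release number (assumed default: stable)
prowler_image_ref() {
  repo="${1:-toniblyx/prowler}"
  tag="${2:-stable}"
  printf '%s:%s\n' "$repo" "$tag"
}
```

For instance, `docker pull "$(prowler_image_ref)"` would fetch the image of the latest release, while passing `latest` as the second argument would track the master branch instead.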


## Features

+200 checks covering security best practices across all AWS regions and most of AWS services and related to the next groups:
+240 checks covering security best practices across all AWS regions and most of AWS services and related to the next groups:

- Identity and Access Management [group1]
- Logging [group2]
- Logging [group2]
- Monitoring [group3]
- Networking [group4]
- CIS Level 1 [cislevel1]
- CIS Level 2 [cislevel2]
- Extras *see Extras section* [extras]
- Extras _see Extras section_ [extras]
- Forensics related group of checks [forensics-ready]
- GDPR [gdpr] Read more [here](#gdpr-checks)
- HIPAA [hipaa] Read more [here](#hipaa-checks)

@@ -66,10 +99,11 @@ Read more about [CIS Amazon Web Services Foundations Benchmark v1.2.0 - 05-23-20

|

||||

With Prowler you can:

|

||||

|

||||

- Get a direct colorful or monochrome report

|

||||

- A HTML, CSV, JUNIT, JSON or JSON ASFF format report

|

||||

- Send findings directly to Security Hub

|

||||

- A HTML, CSV, JUNIT, JSON or JSON ASFF (Security Hub) format report

|

||||

- Send findings directly to the Security Hub

|

||||

- Run specific checks and groups or create your own

|

||||

- Check multiple AWS accounts in parallel or sequentially

|

||||

- Get an inventory of your AWS resources

|

||||

- And more! Read examples below

|

||||

|

||||

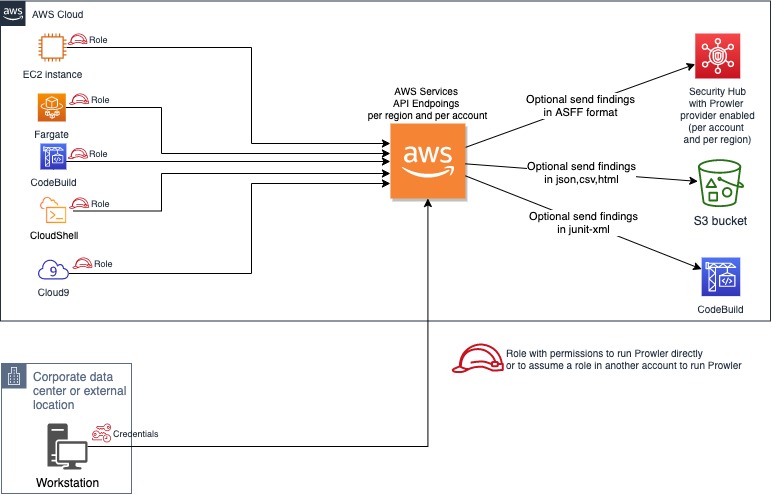

## High level architecture

|

||||

@@ -77,134 +111,151 @@ With Prowler you can:

|

||||

You can run Prowler from your workstation, an EC2 instance, Fargate or any other container, Codebuild, CloudShell and Cloud9.

|

||||

|

||||

|

||||

|

||||

## Requirements and Installation

|

||||

|

||||

Prowler has been written in bash using AWS-CLI and it works in Linux and OSX.

|

||||

Prowler is written in bash on top of AWS-CLI and works on Linux, macOS, or Windows with Cygwin or virtualization. It also requires `jq` and `detect-secrets` to work properly.

|

||||

|

||||

- Make sure the latest version of AWS-CLI is installed on your workstation (it works with either v1 or v2), and other components needed, with Python pip already installed:

|

||||

- Make sure the latest version of AWS-CLI is installed, along with the other required components; Python pip must already be installed. Prowler works with either AWS-CLI v1 or v2, however _the latest v2 is recommended if using new regions since they require an STS v2 token_.

|

||||

|

||||

```sh

|

||||

pip install awscli

|

||||

```

|

||||

- For Amazon Linux (`yum` based Linux distributions and AWS CLI v2):

|

||||

```

|

||||

sudo yum update -y

|

||||

sudo yum remove -y awscli

|

||||

curl "https://awscli.amazonaws.com/awscli-exe-linux-x86_64.zip" -o "awscliv2.zip"

|

||||

unzip awscliv2.zip

|

||||

sudo ./aws/install

|

||||

sudo yum install -y python3 jq git

|

||||

sudo pip3 install detect-secrets==1.0.3

|

||||

git clone https://github.com/prowler-cloud/prowler

|

||||

```

|

||||

- For Ubuntu Linux (`apt` based Linux distributions and AWS CLI v2):

|

||||

|

||||

> NOTE: the Yelp version of detect-secrets is no longer supported; the one from IBM is maintained now. Use the one mentioned below or the specific Yelp version 1.0.3 to make sure it works as expected (`pip install detect-secrets==1.0.3`):

|

||||

```sh

|

||||

pip install "git+https://github.com/ibm/detect-secrets.git@master#egg=detect-secrets"

|

||||

```

|

||||

```

|

||||

sudo apt update

|

||||

sudo apt install python3 python3-pip jq git zip

|

||||

pip install detect-secrets==1.0.3

|

||||

curl "https://awscli.amazonaws.com/awscli-exe-linux-x86_64.zip" -o "awscliv2.zip"

|

||||

unzip awscliv2.zip

|

||||

sudo ./aws/install

|

||||

git clone https://github.com/prowler-cloud/prowler

|

||||

```

|

||||

|

||||

AWS-CLI can also be installed using "brew", "apt", "yum" or manually from <https://aws.amazon.com/cli/>, but `detect-secrets` has to be installed using `pip` or `pip3`. You will need to install `jq` to get the most from Prowler.

|

||||

> NOTE: the Yelp version of detect-secrets is no longer supported; the one from IBM is maintained now. Use the one mentioned below or the specific Yelp version 1.0.3 to make sure it works as expected (`pip install detect-secrets==1.0.3`):

|

||||

|

||||

- Make sure jq is installed. The examples below use "apt" for Debian-like distros and "yum" for RedHat-like distros (such as Amazon Linux):

|

||||

```sh

|

||||

pip install "git+https://github.com/ibm/detect-secrets.git@master#egg=detect-secrets"

|

||||

```

|

||||

|

||||

```sh

|

||||

sudo apt install jq

|

||||

```

|

||||

AWS-CLI can also be installed using other methods; refer to the official documentation for more details: <https://aws.amazon.com/cli/>. However, `detect-secrets` has to be installed using `pip` or `pip3`.

|

||||

|

||||

```sh

|

||||

sudo yum install jq

|

||||

```

|

||||

- Once the Prowler repository is cloned, change into its folder and run it:

|

||||

|

||||

- Previous steps, from your workstation:

|

||||

```sh

|

||||

cd prowler

|

||||

./prowler

|

||||

```

|

||||

|

||||

```sh

|

||||

git clone https://github.com/toniblyx/prowler

|

||||

cd prowler

|

||||

```

|

||||

- Since Prowler uses AWS CLI under the hood, you can follow any authentication method as described [here](https://docs.aws.amazon.com/cli/latest/userguide/cli-configure-quickstart.html#cli-configure-quickstart-precedence). Make sure you have properly configured your AWS-CLI with a valid Access Key and Region or declare AWS variables properly (or instance profile/role):

|

||||

|

||||

- Since Prowler uses AWS CLI under the hood, you can follow any authentication method as described [here](https://docs.aws.amazon.com/cli/latest/userguide/cli-configure-quickstart.html#cli-configure-quickstart-precedence). Make sure you have properly configured your AWS-CLI with a valid Access Key and Region or declare AWS variables properly (or instance profile):

|

||||

```sh

|

||||

aws configure

|

||||

```

|

||||

|

||||

```sh

|

||||

aws configure

|

||||

```

|

||||

or

|

||||

|

||||

or

|

||||

```sh

|

||||

export AWS_ACCESS_KEY_ID="ASXXXXXXX"

|

||||

export AWS_SECRET_ACCESS_KEY="XXXXXXXXX"

|

||||

export AWS_SESSION_TOKEN="XXXXXXXXX"

|

||||

```

|

||||

|

||||

```sh

|

||||

export AWS_ACCESS_KEY_ID="ASXXXXXXX"

|

||||

export AWS_SECRET_ACCESS_KEY="XXXXXXXXX"

|

||||

export AWS_SESSION_TOKEN="XXXXXXXXX"

|

||||

```

|

||||

- Those credentials must be associated to a user or role with proper permissions to do all checks. To make sure, add the AWS managed policies, SecurityAudit and ViewOnlyAccess, to the user or role being used. Policy ARNs are:

|

||||

|

||||

- Those credentials must be associated to a user or role with proper permissions to do all checks. To make sure, add the AWS managed policies, SecurityAudit and ViewOnlyAccess, to the user or role being used. Policy ARNs are:

|

||||

```sh

|

||||

arn:aws:iam::aws:policy/SecurityAudit

|

||||

arn:aws:iam::aws:policy/job-function/ViewOnlyAccess

|

||||

```

|

||||

|

||||

```sh

|

||||

arn:aws:iam::aws:policy/SecurityAudit

|

||||

arn:aws:iam::aws:policy/job-function/ViewOnlyAccess

|

||||

```

|

||||

|

||||

> Additional permissions needed: to make sure Prowler can scan all services included in the group *Extras*, make sure you attach also the custom policy [prowler-additions-policy.json](https://github.com/toniblyx/prowler/blob/master/iam/prowler-additions-policy.json) to the role you are using. If you want Prowler to send findings to [AWS Security Hub](https://aws.amazon.com/security-hub), make sure you also attach the custom policy [prowler-security-hub.json](https://github.com/toniblyx/prowler/blob/master/iam/prowler-security-hub.json).

|

||||

> Additional permissions needed: to make sure Prowler can scan all services included in the group _Extras_, make sure you attach also the custom policy [prowler-additions-policy.json](https://github.com/prowler-cloud/prowler/blob/master/iam/prowler-additions-policy.json) to the role you are using. If you want Prowler to send findings to [AWS Security Hub](https://aws.amazon.com/security-hub), make sure you also attach the custom policy [prowler-security-hub.json](https://github.com/prowler-cloud/prowler/blob/master/iam/prowler-security-hub.json).

|

||||

|

||||

## Usage

|

||||

|

||||

1. Run the `prowler` command without options (it will use your environment variable credentials if they exist or will default to using the `~/.aws/credentials` file and run checks over all regions when needed. The default region is us-east-1):

|

||||

|

||||

```sh

|

||||

./prowler

|

||||

```

|

||||

```sh

|

||||

./prowler

|

||||

```

|

||||

|

||||

Use `-l` to list all available checks and the groups (sections) that reference them. To list all groups use `-L` and to list content of a group use `-l -g <groupname>`.

|

||||

Use `-l` to list all available checks and the groups (sections) that reference them. To list all groups use `-L` and to list content of a group use `-l -g <groupname>`.

|

||||

|

||||

If you want to avoid installing dependencies run it using Docker:

|

||||

If you want to avoid installing dependencies run it using Docker:

|

||||

|

||||

```sh

|

||||

docker run -ti --rm --name prowler --env AWS_ACCESS_KEY_ID --env AWS_SECRET_ACCESS_KEY --env AWS_SESSION_TOKEN toniblyx/prowler:latest

|

||||

```

|

||||

```sh

|

||||

docker run -ti --rm --name prowler --env AWS_ACCESS_KEY_ID --env AWS_SECRET_ACCESS_KEY --env AWS_SESSION_TOKEN toniblyx/prowler:latest

|

||||

```

|

||||

|

||||

In case you want to get reports created by Prowler use docker volume option like in the example below:

|

||||

|

||||

```sh

|

||||

docker run -ti --rm -v /your/local/output:/prowler/output --name prowler --env AWS_ACCESS_KEY_ID --env AWS_SECRET_ACCESS_KEY --env AWS_SESSION_TOKEN toniblyx/prowler:latest -g hipaa -M csv,json,html

|

||||

```

|

||||

|

||||

1. For custom AWS-CLI profile and region, use the following: (it will use your custom profile and run checks over all regions when needed):

|

||||

|

||||

```sh

|

||||

./prowler -p custom-profile -r us-east-1

|

||||

```

|

||||

```sh

|

||||

./prowler -p custom-profile -r us-east-1

|

||||

```

|

||||

|

||||

1. For a single check use option `-c`:

|

||||

|

||||

```sh

|

||||

./prowler -c check310

|

||||

```

|

||||

```sh

|

||||

./prowler -c check310

|

||||

```

|

||||

|

||||

With Docker:

|

||||

With Docker:

|

||||

|

||||

```sh

|

||||

docker run -ti --rm --name prowler --env AWS_ACCESS_KEY_ID --env AWS_SECRET_ACCESS_KEY --env AWS_SESSION_TOKEN toniblyx/prowler:latest "-c check310"

|

||||

```

|

||||

```sh

|

||||

docker run -ti --rm --name prowler --env AWS_ACCESS_KEY_ID --env AWS_SECRET_ACCESS_KEY --env AWS_SESSION_TOKEN toniblyx/prowler:latest "-c check310"

|

||||

```

|

||||

|

||||

or multiple checks separated by comma:

|

||||

or multiple checks separated by comma:

|

||||

|

||||

```sh

|

||||

./prowler -c check310,check722

|

||||

```

|

||||

```sh

|

||||

./prowler -c check310,check722

|

||||

```

|

||||

|

||||

or all checks but some of them:

|

||||

or all checks but some of them:

|

||||

|

||||

```sh

|

||||

./prowler -E check42,check43

|

||||

```

|

||||

```sh

|

||||

./prowler -E check42,check43

|

||||

```

|

||||

|

||||

or for custom profile and region:

|

||||

or for custom profile and region:

|

||||

|

||||

```sh

|

||||

./prowler -p custom-profile -r us-east-1 -c check11

|

||||

```

|

||||

```sh

|

||||

./prowler -p custom-profile -r us-east-1 -c check11

|

||||

```

|

||||

|

||||

or for a group of checks use group name:

|

||||

or for a group of checks use group name:

|

||||

|

||||

```sh

|

||||

./prowler -g group1 # for iam related checks

|

||||

```

|

||||

```sh

|

||||

./prowler -g group1 # for iam related checks

|

||||

```

|

||||

|

||||

or exclude some checks in the group:

|

||||

or exclude some checks in the group:

|

||||

|

||||

```sh

|

||||

./prowler -g group4 -E check42,check43

|

||||

```

|

||||

```sh

|

||||

./prowler -g group4 -E check42,check43

|

||||

```

|

||||

|

||||

Valid check numbers are based on the AWS CIS Benchmark guide, so 1.1 is check11 and 3.10 is check310

|

||||

Valid check numbers are based on the AWS CIS Benchmark guide, so 1.1 is check11 and 3.10 is check310
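The mapping from a CIS control ID to a check name can be sketched as a small shell helper. The function name `cis_to_check` is hypothetical and not part of Prowler; it only illustrates the "drop the dots, prefix with `check`" rule described above, and it applies only to CIS-numbered checks.

```sh
# Hypothetical helper (not shipped with Prowler): turn a CIS control ID
# such as "3.10" into the matching check name.
cis_to_check() {
    # Remove the dots and prefix the result with "check".
    printf 'check%s\n' "$(printf '%s' "$1" | tr -d '.')"
}

cis_to_check 1.1
cis_to_check 3.10
```

The result could then be passed to `-c`, for example `./prowler -c "$(cis_to_check 3.10)"`.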

|

||||

|

||||

### Regions

|

||||

|

||||

By default, Prowler scans all available opt-in regions, which might take a long time depending on the number of resources and regions used. The same applies to GovCloud or China regions. See Advanced Usage below for examples.

|

||||

|

||||

Prowler has two parameters related to regions: `-r`, which is used to query AWS service API endpoints (it uses `us-east-1` by default and is required for GovCloud or China), and `-f`, which filters the regions you want to scan. For example, to scan Dublin only use `-f eu-west-1`, and to scan Dublin and Ohio use `-f 'eu-west-1 us-east-2'`; note the single quotes and the space between regions.

|

||||

Prowler has two parameters related to regions: `-r`, which is used to query AWS service API endpoints (it uses `us-east-1` by default and is required for GovCloud or China), and `-f`, which filters the regions you want to scan. For example, to scan Dublin only use `-f eu-west-1`, and to scan Dublin and Ohio use `-f eu-west-1,us-east-2`; note that the regions are separated by a comma (the value can still be quoted as before, `-f 'eu-west-1,us-east-2'`).
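When the regions to scan come from a list, the comma-separated value for `-f` can be built in the shell. This is a minimal sketch; `join_regions` is a hypothetical helper name, not a Prowler feature.

```sh
# Hypothetical helper: join region names with commas for use with -f.
join_regions() {
    # "$*" joins the arguments with the first character of IFS;
    # the subshell keeps the IFS change local to this call.
    ( IFS=,; printf '%s\n' "$*" )
}

join_regions eu-west-1 us-east-2
```

The output could be used as `./prowler -f "$(join_regions eu-west-1 us-east-2)"`.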

|

||||

|

||||

## Screenshots

|

||||

|

||||

@@ -216,7 +267,7 @@ Prowler has two parameters related to regions: `-r` that is used query AWS servi

|

||||

|

||||

<img width="900" alt="Prowler html" src="https://user-images.githubusercontent.com/3985464/141443976-41d32cc2-533d-405a-92cb-affc3995d6ec.png">

|

||||

|

||||

- Sample screenshot of the Quicksight dashboard, see [https://quicksight-security-dashboard.workshop.aws](quicksight-security-dashboard.workshop.aws/):

|

||||

- Sample screenshot of the Quicksight dashboard, see [quicksight-security-dashboard.workshop.aws](https://quicksight-security-dashboard.workshop.aws/):

|

||||

|

||||

<img width="900" alt="Prowler with Quicksight" src="https://user-images.githubusercontent.com/3985464/128932819-0156e838-286d-483c-b953-fda68a325a3d.png">

|

||||

|

||||

@@ -228,80 +279,260 @@ Prowler has two parameters related to regions: `-r` that is used query AWS servi

|

||||

|

||||

1. If you want to save your report for later analysis, there are different ways to do it natively (supported formats are text, mono, csv, json, json-asff, junit-xml and html; see the note below for more info):

|

||||

|

||||

```sh

|

||||

./prowler -M csv

|

||||

```

|

||||

```sh

|

||||

./prowler -M csv

|

||||

```

|

||||

|

||||

or with multiple formats at the same time:

|

||||

or with multiple formats at the same time:

|

||||

|

||||

```sh

|

||||

./prowler -M csv,json,json-asff,html

|

||||

```

|

||||

```sh

|

||||

./prowler -M csv,json,json-asff,html

|

||||

```

|

||||

|

||||

or just a group of checks in multiple formats:

|

||||

or just a group of checks in multiple formats:

|

||||

|

||||

```sh

|

||||

./prowler -g gdpr -M csv,json,json-asff

|

||||

```

|

||||

```sh

|

||||

./prowler -g gdpr -M csv,json,json-asff

|

||||

```

|

||||

|

||||

or if you want a sorted and dynamic HTML report do:

|

||||

or if you want a sorted and dynamic HTML report do:

|

||||

|

||||

```sh

|

||||

./prowler -M html

|

||||

```

|

||||

```sh

|

||||

./prowler -M html

|

||||

```

|

||||

|

||||

Now `-M` creates a file inside the prowler `output` directory named `prowler-output-AWSACCOUNTID-YYYYMMDDHHMMSS.format`. You don't have to specify anything else, no pipes, no redirects.

|

||||

Now `-M` creates a file inside the prowler `output` directory named `prowler-output-AWSACCOUNTID-YYYYMMDDHHMMSS.format`. You don't have to specify anything else, no pipes, no redirects.
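Because the timestamp in the file name is in `YYYYMMDDHHMMSS` form, the newest report for an account sorts last alphabetically. The sketch below relies on that; `latest_report` is a hypothetical helper, and it assumes the directory only contains files following the naming pattern described above.

```sh
# Hypothetical helper: print the newest report for one account and format.
# Usage: latest_report <output-dir> <account-id> <format>
latest_report() {
    dir=$1
    account=$2
    format=$3
    # The glob expands in sorted order, so the last match has the latest timestamp.
    set -- "$dir"/prowler-output-"$account"-*."$format"
    # If nothing matched, the pattern is left unexpanded and does not exist.
    [ -e "$1" ] || return 1
    for f in "$@"; do
        last=$f
    done
    printf '%s\n' "$last"
}

# Example (assumes reports already exist in ./output):
# latest_report output 123456789012 csv
```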

|

||||

|

||||

or just saving the output to a file like below:

|

||||

or just saving the output to a file like below:

|

||||

|

||||

```sh

|

||||

./prowler -M mono > prowler-report.txt

|

||||

```

|

||||

```sh

|

||||

./prowler -M mono > prowler-report.txt

|

||||

```

|

||||

|

||||

To generate JUnit report files, include the junit-xml format. This can be combined with any other format. Files are written inside a prowler root directory named `junit-reports`:

|

||||

To generate JUnit report files, include the junit-xml format. This can be combined with any other format. Files are written inside a prowler root directory named `junit-reports`:

|

||||

|

||||

```sh

|

||||

./prowler -M text,junit-xml

|

||||

```

|

||||

```sh

|

||||

./prowler -M text,junit-xml

|

||||

```

|

||||

|

||||

>Note about output formats to use with `-M`: "text" is the default one with colors, "mono" is like default one but monochrome, "csv" is comma separated values, "json" plain basic json (without comma between lines) and "json-asff" is also json with Amazon Security Finding Format that you can ship to Security Hub using `-S`.

|

||||

> Note about output formats to use with `-M`: "text" is the default one with colors, "mono" is like default one but monochrome, "csv" is comma separated values, "json" plain basic json (without comma between lines) and "json-asff" is also json with Amazon Security Finding Format that you can ship to Security Hub using `-S`.

|

||||

|

||||

or save your report in an S3 bucket (this only works for text or mono. For csv, json or json-asff it has to be copied afterwards):

|

||||

To save your report in an S3 bucket, use `-B` to define a custom output bucket along with `-M` to define the output format that is going to be uploaded to S3:

|

||||

|

||||

```sh

|

||||

./prowler -M mono | aws s3 cp - s3://bucket-name/prowler-report.txt

|

||||

```

|

||||

```sh

|

||||

./prowler -M csv -B my-bucket/folder/

|

||||

```

|

||||

|

||||

When generating multiple formats and running using Docker, to retrieve the reports, bind a local directory to the container, e.g.:

|

||||

> In the case you do not want to use the assumed role credentials but the initial credentials to put the reports into the S3 bucket, use `-D` instead of `-B`. Make sure that the used credentials have s3:PutObject permissions in the S3 path where the reports are going to be uploaded.

|

||||

|

||||

```sh

|

||||

docker run -ti --rm --name prowler --volume "$(pwd)":/prowler/output --env AWS_ACCESS_KEY_ID --env AWS_SECRET_ACCESS_KEY --env AWS_SESSION_TOKEN toniblyx/prowler:latest -M csv,json

|

||||

```

|

||||

When generating multiple formats and running using Docker, to retrieve the reports, bind a local directory to the container, e.g.:

|

||||

|

||||

```sh

|

||||

docker run -ti --rm --name prowler --volume "$(pwd)":/prowler/output --env AWS_ACCESS_KEY_ID --env AWS_SECRET_ACCESS_KEY --env AWS_SESSION_TOKEN toniblyx/prowler:latest -M csv,json

|

||||

```

|

||||

|

||||

1. To perform an assessment based on CIS Profile Definitions you can use cislevel1 or cislevel2 with `-g` flag, more information about this [here, page 8](https://d0.awsstatic.com/whitepapers/compliance/AWS_CIS_Foundations_Benchmark.pdf):

|

||||

|

||||

```sh

|

||||

./prowler -g cislevel1

|

||||

```

|

||||

```sh

|

||||

./prowler -g cislevel1

|

||||

```

|

||||

|

||||

1. To check multiple AWS accounts in parallel (up to 4 simultaneously with `-P 4`), use the command below; to do so by assuming a role, read the Advanced Usage section further down:

|

||||

|

||||

```sh

|

||||

grep -E '^\[([0-9A-Za-z_-]+)\]' ~/.aws/credentials | tr -d '][' | shuf | \

|

||||

xargs -n 1 -L 1 -I @ -r -P 4 ./prowler -p @ -M csv 2> /dev/null >> all-accounts.csv

|

||||

```

|

||||

```sh

|

||||

grep -E '^\[([0-9A-Za-z_-]+)\]' ~/.aws/credentials | tr -d '][' | shuf | \

|

||||

xargs -n 1 -L 1 -I @ -r -P 4 ./prowler -p @ -M csv 2> /dev/null >> all-accounts.csv

|

||||

```
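The first half of that pipeline only extracts profile names from the section headers of the credentials file. A minimal sketch of that step on its own, useful for checking which profiles would be scanned before launching anything; `list_profiles` is a hypothetical helper name.

```sh
# Hypothetical helper: print the profile names found in an AWS credentials file.
# Usage: list_profiles <credentials-file>
list_profiles() {
    # Keep only lines of the form "[name]" and print the name without brackets.
    sed -n 's/^\[\([0-9A-Za-z_-]\{1,\}\)\].*$/\1/p' "$1"
}

# Example:
# list_profiles ~/.aws/credentials
```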

|

||||

|

||||

1. For help about usage run:

|

||||

|

||||

```

|

||||

./prowler -h

|

||||

```

|

||||

```

|

||||

./prowler -h

|

||||

```

|

||||

|

||||

## Database providers connector

|

||||

|

||||

You can send Prowler's output to different databases (right now only PostgreSQL is supported).

|

||||

|

||||

Jump into the section for the database provider you want to use and follow the required steps to configure it.

|

||||

|

||||

### PostgreSQL

|

||||

|

||||

Install psql

|

||||

|

||||

- Mac -> `brew install libpq`

|

||||

- Ubuntu -> `sudo apt-get install postgresql-client`

|

||||

- RHEL/Centos -> `sudo yum install postgresql10`

|

||||

|

||||

#### Audit ID Field

|

||||

|

||||

To use the Prowler PostgreSQL connector, you must set the `-u` flag so that the `audit_id` field is included in the query. This field identifies each audit stored in the database and must be a UUID v4 to match the table schema.

|

||||

For example:

|

||||

```

|

||||

./prowler -M csv -d postgresql -u e5a0f214-8bf9-4600-a0c3-ff659b30e6c0

|

||||

```
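Since the audit ID has to be a UUID v4, it can be worth validating the value before passing it to `-u`. The sketch below is an assumption-laden illustration: `is_uuid_v4` is a hypothetical helper, and the commented `uuidgen` line assumes that tool is installed and produces random (version 4) UUIDs on your system.

```sh
# Hypothetical helper: succeed only if the argument looks like a UUID v4.
is_uuid_v4() {
    # Version nibble must be 4 and the variant nibble one of 8, 9, a, b.
    printf '%s\n' "$1" | grep -Eqi \
        '^[0-9a-f]{8}-[0-9a-f]{4}-4[0-9a-f]{3}-[89ab][0-9a-f]{3}-[0-9a-f]{12}$'
}

# Example (assumes uuidgen is available):
# audit_id=$(uuidgen | tr 'A-Z' 'a-z')
# is_uuid_v4 "$audit_id" && ./prowler -d postgresql -u "$audit_id"
```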

|

||||

|

||||

#### Credentials

|

||||

|

||||

There are two options to pass the PostgreSQL credentials to Prowler:

|

||||

|

||||

##### Using a .pgpass file

|

||||

|

||||

Configure a `~/.pgpass` file in the home folder of the user that is going to launch Prowler ([pgpass file doc](https://www.postgresql.org/docs/current/libpq-pgpass.html)), adding an extra field at the end of the line, separated by `:`, to name the table. Use the following format:

|

||||

`hostname:port:database:username:password:table`
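To make the extended six-field layout concrete, the sketch below splits one such line into its parts. `parse_pgpass_line` is a hypothetical helper and the sample values are made up; it assumes no field contains an escaped colon, and it is not how Prowler itself reads the file.

```sh
# Hypothetical helper: split an extended .pgpass line into named fields.
parse_pgpass_line() {
    printf '%s\n' "$1" | {
        # Split on ":"; the sixth field is the extra table name.
        IFS=: read -r host port database username password table
        # The password is deliberately not printed.
        printf 'host=%s port=%s db=%s user=%s table=%s\n' \
            "$host" "$port" "$database" "$username" "$table"
    }
}

parse_pgpass_line 'localhost:5432:prowler_db:prowler:secret:prowler_findings'
```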

|

||||

|

||||

##### Using environment variables

|

||||

|

||||

- Configure the following environment variables:

|

||||

- `POSTGRES_HOST`

|

||||

- `POSTGRES_PORT`

|

||||

- `POSTGRES_USER`

|

||||

- `POSTGRES_PASSWORD`

|

||||

- `POSTGRES_DB`

|

||||

- `POSTGRES_TABLE`

|

||||

> _Note_: If you are using a schema different than postgres please include it at the beginning of the `POSTGRES_TABLE` variable, like: `export POSTGRES_TABLE=prowler.findings`
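Before launching a scan, a quick check that all six variables are set can save a failed run. This is a minimal sketch; `missing_pg_vars` is a hypothetical helper and only reports which of the variables listed above are unset or empty.

```sh
# Hypothetical helper: print the name of every required variable that is
# unset or empty; prints nothing when the environment is complete.
missing_pg_vars() {
    for name in POSTGRES_HOST POSTGRES_PORT POSTGRES_USER \
                POSTGRES_PASSWORD POSTGRES_DB POSTGRES_TABLE; do
        # Indirect lookup of the variable called $name.
        eval "value=\${$name:-}"
        [ -n "$value" ] || printf '%s\n' "$name"
    done
}

# Example:
# [ -z "$(missing_pg_vars)" ] && ./prowler -d postgresql -u <audit-id>
```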

|

||||

|

||||

You also need to enable the `uuid-ossp` PostgreSQL extension:

|

||||

|

||||

`CREATE EXTENSION IF NOT EXISTS "uuid-ossp";`

|

||||

|

||||

Create a table in your PostgreSQL database to store Prowler's data. You can use the following SQL statement to create the table:

|

||||

|

||||

```
CREATE TABLE IF NOT EXISTS prowler_findings (
    id uuid,
    audit_id uuid,
    profile text,
    account_number text,
    region text,
    check_id text,
    result text,
    item_scored text,
    item_level text,
    check_title text,
    result_extended text,
    check_asff_compliance_type text,
    severity text,
    service_name text,
    check_asff_resource_type text,
    check_asff_type text,
    risk text,
    remediation text,
    documentation text,
    check_caf_epic text,
    resource_id text,
    account_details_email text,
    account_details_name text,
    account_details_arn text,
    account_details_org text,
    account_details_tags text,
    prowler_start_time text
);
```

|

||||

|

||||

- Execute Prowler with `-d` flag, for example:

|

||||

`./prowler -M csv -d postgresql -u e5a0f214-8bf9-4600-a0c3-ff659b30e6c0`

|

||||

> _Note_: This command creates a `csv` output file and stores the Prowler output in the configured PostgreSQL DB. It's an example, `-d` flag **does not** require `-M` to run.

|

||||

|

||||

## Output Formats

|

||||

|

||||

Prowler supports natively the following output formats:

|

||||

|

||||

- CSV

|

||||

- JSON

|

||||

- JSON-ASFF

|

||||

- HTML

|

||||

- JUNIT-XML

|

||||

|

||||

The structure of each format is shown below.

|

||||

|

||||

### CSV

|

||||

|

||||

| PROFILE | ACCOUNT_NUM | REGION | TITLE_ID | CHECK_RESULT | ITEM_SCORED | ITEM_LEVEL | TITLE_TEXT | CHECK_RESULT_EXTENDED | CHECK_ASFF_COMPLIANCE_TYPE | CHECK_SEVERITY | CHECK_SERVICENAME | CHECK_ASFF_RESOURCE_TYPE | CHECK_ASFF_TYPE | CHECK_RISK | CHECK_REMEDIATION | CHECK_DOC | CHECK_CAF_EPIC | CHECK_RESOURCE_ID | PROWLER_START_TIME | ACCOUNT_DETAILS_EMAIL | ACCOUNT_DETAILS_NAME | ACCOUNT_DETAILS_ARN | ACCOUNT_DETAILS_ORG | ACCOUNT_DETAILS_TAGS |
| ------- | ----------- | ------ | -------- | ------------ | ----------- | ---------- | ---------- | --------------------- | -------------------------- | -------------- | ----------------- | ------------------------ | --------------- | ---------- | ----------------- | --------- | -------------- | ----------------- | ------------------ | --------------------- | -------------------- | ------------------- | ------------------- | -------------------- |

|

||||

|

||||

### JSON

|

||||

|

||||

```
{
  "Profile": "ENV",
  "Account Number": "1111111111111",
  "Control": "[check14] Ensure access keys are rotated every 90 days or less",
  "Message": "us-west-2: user has not rotated access key 2 in over 90 days",
  "Severity": "Medium",
  "Status": "FAIL",
  "Scored": "",
  "Level": "CIS Level 1",
  "Control ID": "1.4",
  "Region": "us-west-2",
  "Timestamp": "2022-05-18T10:33:48Z",
  "Compliance": "ens-op.acc.1.aws.iam.4 ens-op.acc.5.aws.iam.3",
  "Service": "iam",
  "CAF Epic": "IAM",
  "Risk": "Access keys consist of an access key ID and secret access key which are used to sign programmatic requests that you make to AWS. AWS users need their own access keys to make programmatic calls to AWS from the AWS Command Line Interface (AWS CLI)- Tools for Windows PowerShell- the AWS SDKs- or direct HTTP calls using the APIs for individual AWS services. It is recommended that all access keys be regularly rotated.",
  "Remediation": "Use the credential report to ensure access_key_X_last_rotated is less than 90 days ago.",
  "Doc link": "https://docs.aws.amazon.com/IAM/latest/UserGuide/id_credentials_getting-report.html",
  "Resource ID": "terraform-user",
  "Account Email": "",
  "Account Name": "",
  "Account ARN": "",
  "Account Organization": "",
  "Account tags": ""
}
```

|

||||

|

||||

> NOTE: Each finding is a `json` object.

|

||||

|

||||

### JSON-ASFF

|

||||

|

||||

```
{
  "SchemaVersion": "2018-10-08",
  "Id": "prowler-1.4-1111111111111-us-west-2-us-west-2_user_has_not_rotated_access_key_2_in_over_90_days",
  "ProductArn": "arn:aws:securityhub:us-west-2::product/prowler/prowler",
  "RecordState": "ACTIVE",
  "ProductFields": {
    "ProviderName": "Prowler",
    "ProviderVersion": "2.9.0-13April2022",
    "ProwlerResourceName": "user"
  },
  "GeneratorId": "prowler-check14",
  "AwsAccountId": "1111111111111",
  "Types": [
    "ens-op.acc.1.aws.iam.4 ens-op.acc.5.aws.iam.3"
  ],
  "FirstObservedAt": "2022-05-18T10:33:48Z",
  "UpdatedAt": "2022-05-18T10:33:48Z",
  "CreatedAt": "2022-05-18T10:33:48Z",
  "Severity": {
    "Label": "MEDIUM"
  },
  "Title": "iam.[check14] Ensure access keys are rotated every 90 days or less",
  "Description": "us-west-2: user has not rotated access key 2 in over 90 days",
  "Resources": [
    {
      "Type": "AwsIamUser",
      "Id": "user",
      "Partition": "aws",
      "Region": "us-west-2"
    }
  ],
  "Compliance": {
    "Status": "FAILED",
    "RelatedRequirements": [
      "ens-op.acc.1.aws.iam.4 ens-op.acc.5.aws.iam.3"
    ]
  }
}
```

|

||||

|

||||

> NOTE: Each finding is a `json` object.

|

||||

|

||||

## Advanced Usage

|

||||

|

||||

### Assume Role:

|

||||

|

||||